Trump’s Pentagon Bans Anthropic’s Claude: Hegseth vs. Dario Amodei

The EA power play may have backfired.

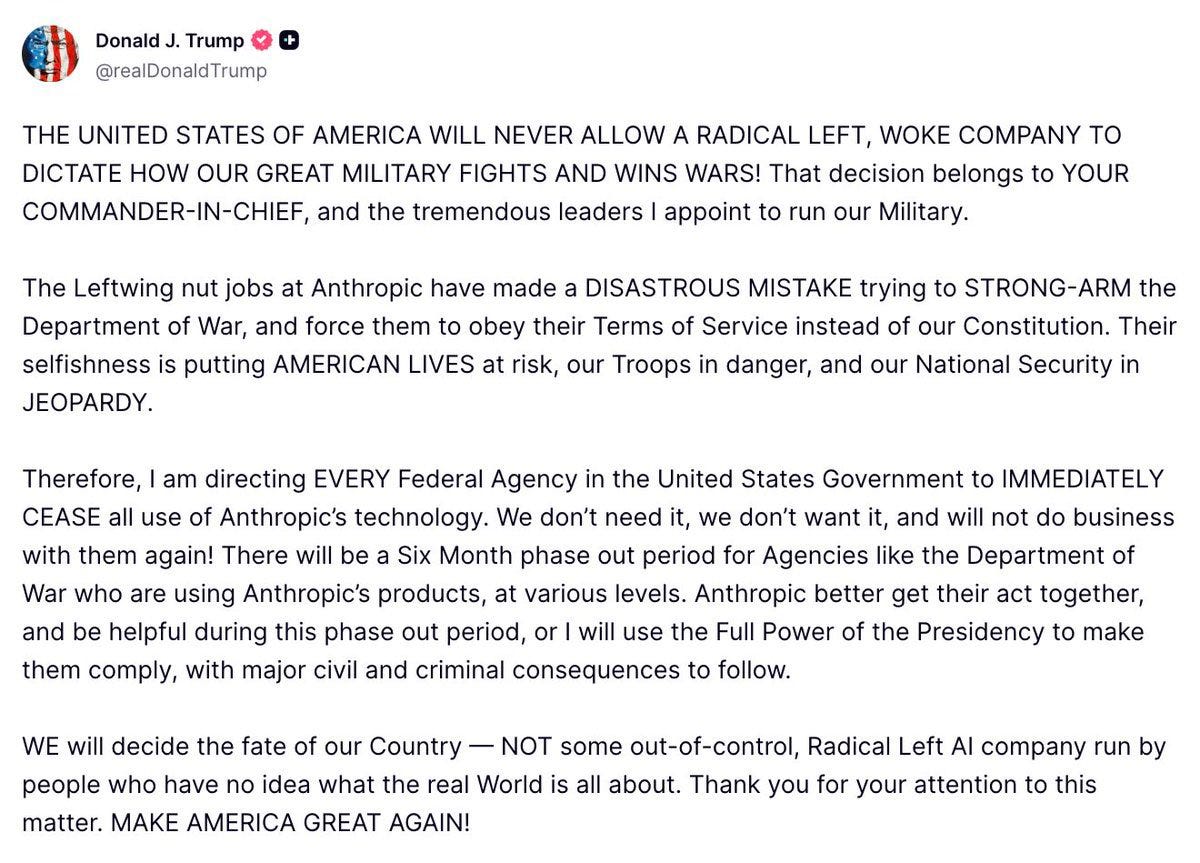

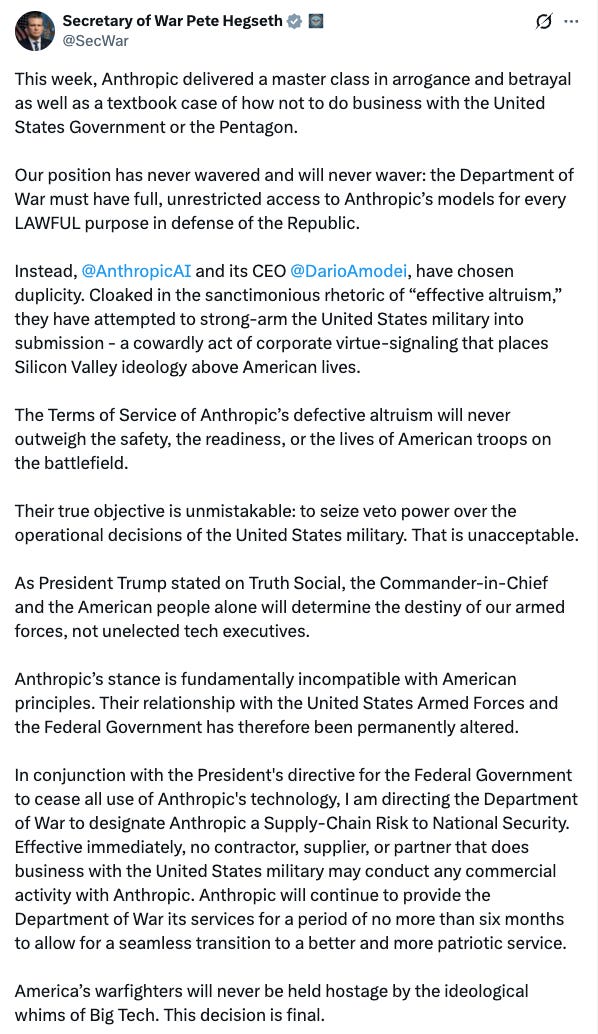

On February 27, 2026, Trump directed every federal agency to stop using Anthropic. Defense Secretary Pete Hegseth designated Anthropic a “supply chain risk” and declared that no contractor doing business with the U.S. military may conduct “any commercial activity” with Anthropic.

Hours later, OpenAI CEO Sam Altman announced a deal to deploy ChatGPT on the Pentagon’s classified networks, claiming red lines substantively similar to those Anthropic had been fighting for.

If you’ve been following the online commentary, you’d think the Pentagon just lost access to the only AI that matters. And you’d be wrong.

Claude Is Great. It’s Not the Best. That’s Not Why the Pentagon Uses It.

Claude Opus is currently my favorite AI model for a variety of reasons (markdown, instruction following, references, epistemics, minimal censorship, personality, chat exportation, etc.). But “my favorite” and “the current best” aren’t the same thing. Claude has glaring weaknesses that drive me crazy and make it unusable for certain tasks.

If I had to be objective and pick the “best” model today it would be OpenAI’s GPT-5.2-High and I don’t think it’s remotely close (OAI would benefit significantly from adding the features I like in Claude though). Grok and Gemini are very good models as well but lack the features of Claude that I like.

If Claude isn’t the best model, why does the government need it so badly?

Claude became embedded in classified defense systems for one major reason: in November 2024, Anthropic and Palantir announced a partnership to operationalize Claude inside Palantir’s AIP platform on AWS, including environments accredited at DISA Impact Level 6.

Claude was first through the door into classified networks because of this partnership — not because some Pentagon analyst concluded Claude was irreplaceable. The Pentagon’s genai.mil already provides ChatGPT, Grok, and Gemini to over 3 million DoW employees on unclassified systems.

The bottlenecks for alternatives in classified environments are accreditation timelines, integration work, and bureaucratic clearance… not capability. Palantir’s AIP platform is model-agnostic by design and supports swapping in any AI model.

Palantir CEO Alex Karp has been saying for years:

“The large language model is essentially a commodity.”

Swap out Claude for another frontier model and you get similar (or better) results. I should note that I don’t fully agree with Karp at this point, even if his claim is roughly accurate.

BowTiedBull quipped:

“Really weird way to announce it’s the best AI. Otherwise wouldn’t be forced to ban it.”

I understand BTB’s logic, but I don’t think it’s quite apt here. Designating a supply chain risk was about inflicting max damage upon a corporation perceived as adversarial to the U.S. government.

The current administration has a specific set of goals that it is trying to accomplish efficiently. The Pentagon knows Claude is the only model fully integrated on Palantir and wants to make the most of it, hence the pressure to work out a quick deal and turn up the heat.

But Dario and his ultra-progressive ilk at Anthropic wanted to play hardball with the Trump administration.

Many say: Okay then just use another AI! But this is disingenuous for a variety of reasons. Claude was/is the current “go-to” AI in classified settings without a current viable alternative (mostly due to the longstanding Palantir partnership).

Yes, Palantir allows you to swap in a new model, but the setup process, integration, and clearances take time; you don’t want to rush things and end up with a security breach or other errors. The gov wanted ToS that allowed it to use the model for anything “legal” under U.S. law, for both classified and unclassified ops.

Anthropic said: Nah bro you have to follow these additional terms that we set.

This is akin to a weapons manufacturer selling munitions to the U.S. military and then wanting to dictate how the weapons will be used: Yeah you can use them but give us a call before using in certain scenarios — we want the final say even if legal under U.S. law. We think the laws need to catch up to current reality.

The attack on Anthropic by Trump et al. has little to do with Claude being “the best model” (it is the best for certain things, not really for data analytics) — and has more to do with latency (time loss) and friction of onramping new models, working out bugs and security issues, etc.

Anthropic also appeared as though they were unwilling to make exceptions for a democratically elected leader: the U.S. president. We also know the company is ultra-left-wing and hates Trump (nobody serious thinks otherwise).

And since Hegseth used the “supply chain risk” designation as a threat against Anthropic, he’d look weak if he didn’t actually follow through. So he did… and here we are. Hegseth set the deadline, but Anthropic didn’t play ball.

Sam Altman and OpenAI worked out a deal with the U.S. military

Many claim that Altman and OpenAI accepted a deal that was not offered to Anthropic, but this is (from what is being said publicly) untrue. There is specific nuance to keep in mind.

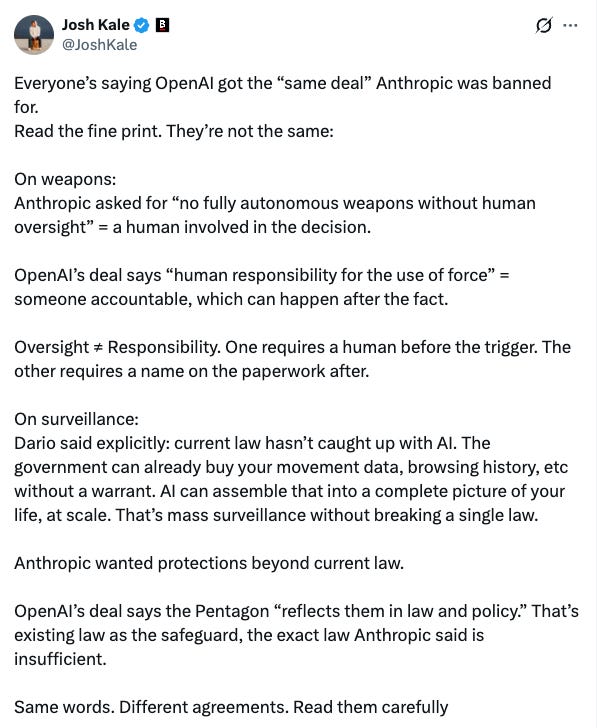

Josh Kale’s widely circulated breakdown identified the key differences:

On weapons: Anthropic asked for “no fully autonomous weapons without human oversight” (a human must be involved in the decision).

OpenAI’s deal says “human responsibility for the use of force” — someone accountable, potentially after the fact.

This is essentially a “before the trigger” vs. “after the trigger” permission.

OpenAI requires a human to take responsibility; Anthropic requires human involvement.

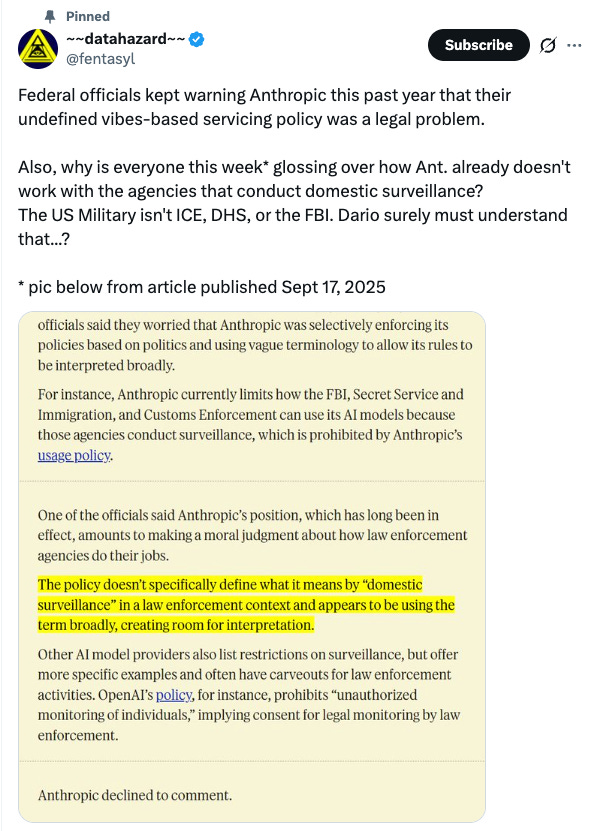

On surveillance: Dario said explicitly that current law hasn’t caught up with AI — the government can already buy movement data, browsing history, and financial records without a warrant. AI can assemble that into a complete profile at scale.

Anthropic wanted protections beyond current law and thinks it knows what new laws the U.S. should have.

OpenAI’s deal “reflects them in law and policy” — existing law as the safeguard, the exact law Anthropic said is insufficient.

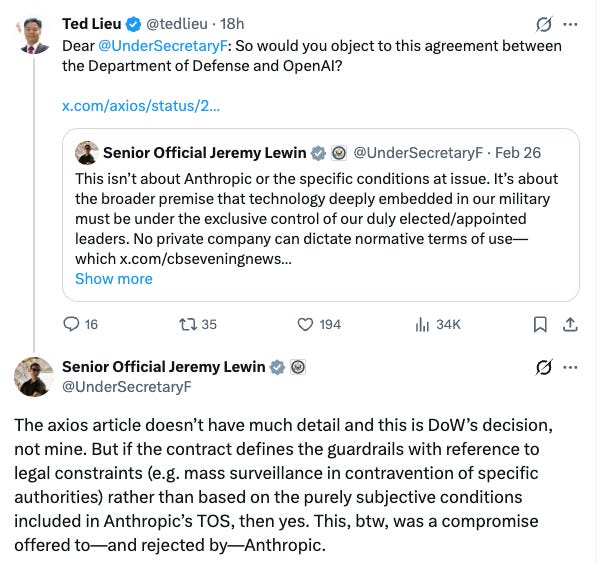

Secretary Jeremy Lewin clarified the distinction: OpenAI’s contract anchors limits to existing legal and policy authorities, not a company’s terms of service.

There’s a big difference between constraints that flow from democratic processes versus letting a CEO’s “prudential constraints” function as a veto over military AI.

The Pentagon wants the full ability to do its job without being held up by a CEO’s personal concerns. Anthropic cares more about taking a personal stand in areas where it wants new laws passed; it thinks it should be allowed to set new laws and define what Americans need.

Dario’s Relationship with the Department of War

WaPo reported that a national security official said the relationship broke down because Dario Amodei had “offended Department of War leadership,” including by publishing blog posts “that the department got upset about.”

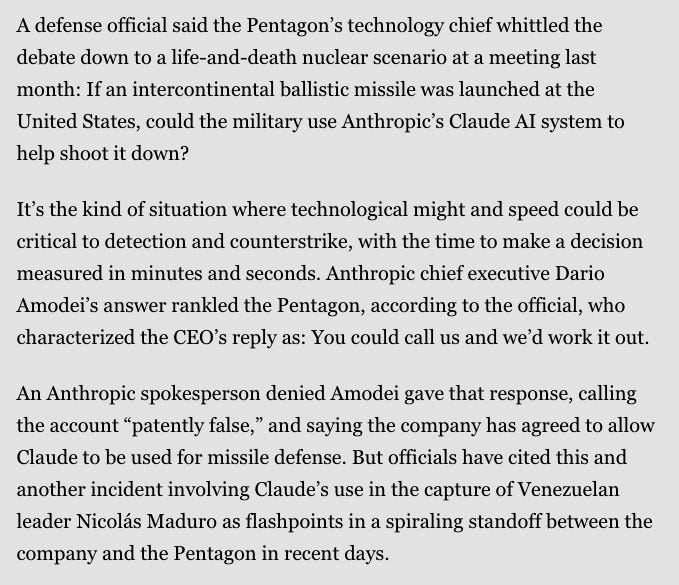

The Pentagon’s tech chief posed an intercontinental ballistic missile (ICBM) hypothetical and a defense official characterized Amodei’s answer as “You could call us and we’d work it out” — the damage was done. (Anthropic denies this account and says it agreed to missile defense.)

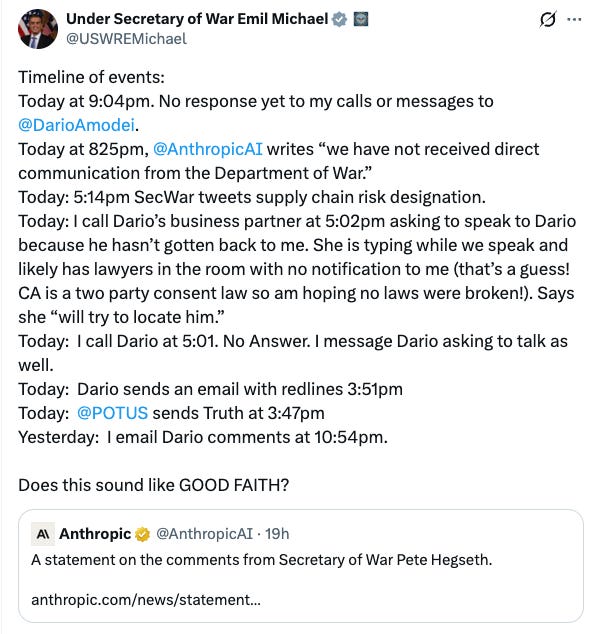

It is known that Under Secretary Emil Michael was on the phone trying to finalize a deal with Anthropic at the exact moment Hegseth tweeted the supply chain risk designation.

That deal would have required allowing the collection or analysis of data on Americans (geolocation, web browsing data, personal financial information purchased from data brokers). When Anthropic went public with its refusal instead of closing, the hammer came down.

Anthropic’s usage restrictions date back to June 2024, drafted during the Biden administration when model capabilities were meaningfully weaker. The $200M contract was signed July 2025 under Trump.

Sure, the government knew the terms, but models became dramatically more capable in the months since and were deeply integrated into Palantir’s classified workflows. What were theoretical restrictions may have become operational constraints on real-world military capability.

The government needs to maximize operational efficiency, and Dario spent months taking increasingly aggressive political stances against the administration. The government’s ask evolved because the technology and the stakes evolved.

Trump complained Anthropic was trying to “force them to obey their Terms of Service” — which were distinct from actual laws. Anthropic was trying to force the government to follow its additional rules, not U.S. law.

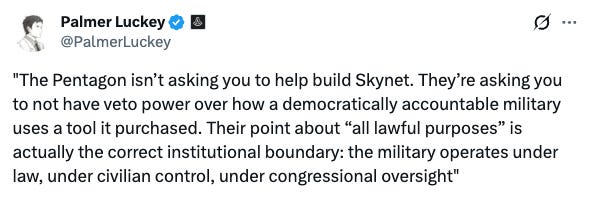

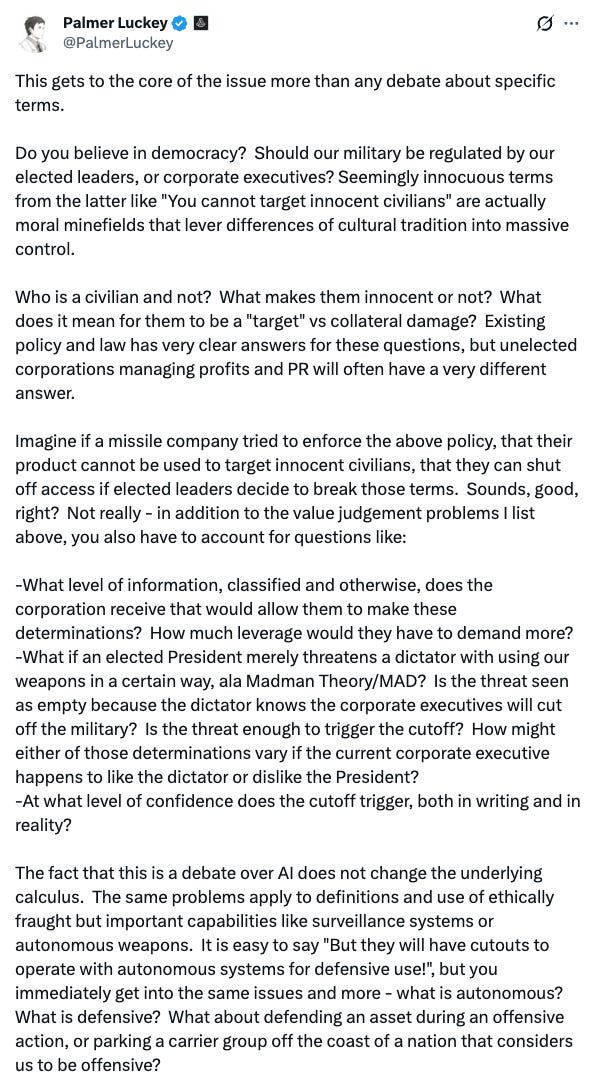

Palmer Luckey, who supplies defense technology to both Democratic and Republican administrations via Anduril (because he’s legitimately America First), summed it up perfectly:

“The Pentagon isn’t asking you to help build Skynet. They’re asking you to not have veto power over how a democratically accountable military uses a tool it purchased. Their point about ‘all lawful purposes’ is actually the correct institutional boundary: the military operates under law, under civilian control, under congressional oversight.”

Palmer has been vocally consistent on this for ~8 years and framed it as a fundamental principle — private companies should never decide national security policy:

“I should note that I am not some Johnny Come Lately on this issue. I have been vocally on the record for eight years now. It is a fundamental principle of American military power, hotly debated even at the founding, and MUST remain true for Anduril.”

“All lawful use” is the bare minimum for a tool that was purchased with taxpayer dollars and that the military is using to defend U.S. interests domestically and abroad.

Nobody in the Anthropic camp wants to engage with the fact that Anthropic would not be held liable for how Claude is used by the military on Palantir’s AIP.

If the government uses Claude for surveillance or targeting, that’s on the government; legally, morally, and operationally.

Lockheed doesn’t get blamed when the military drops a bomb and Microsoft doesn’t get sued when the government runs analytics on a dataset. Nothing the Pentagon was asking for was illegal.

Anthropic wasn’t even being asked to break the law. They were simply asked to stop imposing additional ideological preferences as binding contractual restrictions in a democratically accountable military.

Dario with the Subliminals

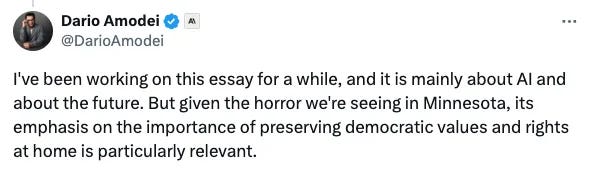

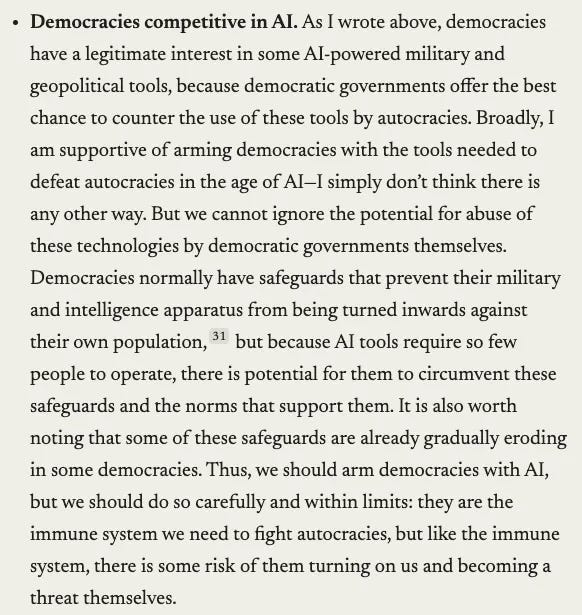

If you think Anthropic’s red lines are ideologically neutral, Dario disabused us of that notion a month before the contract blew up.

On January 26, he posted on X:

“Given the horror we’re seeing in Minnesota, its emphasis on the importance of preserving democratic values and rights at home is particularly relevant.”

He timed the release of his AI essay to coincide with his condemnation of ICE enforcement. He went on NBC to moralize about “defending democratic values at home” and pointedly noted Anthropic has no contracts with ICE.

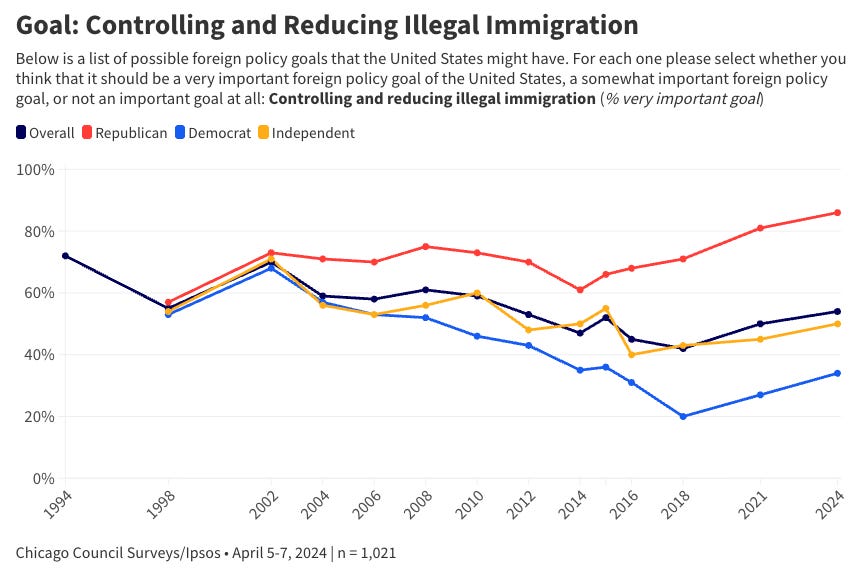

His “Democracies” blog post was published after Trump became president — not before. Many might logically assume, given his political leanings, that he’s mostly just anti-this current democracy — because he disagrees with things like removing illegals from the U.S. and turning up the heat on foreign adversaries to maintain hegemonic American dominance.

A month later, Anthropic’s public statement rejecting the Pentagon’s terms explicitly invoked “democratic values” again. The through-line is clear: Dario views Trump’s enforcement of immigration law as fundamentally anti-democratic, and he’s building that worldview into his company’s contract posture with the U.S. military.

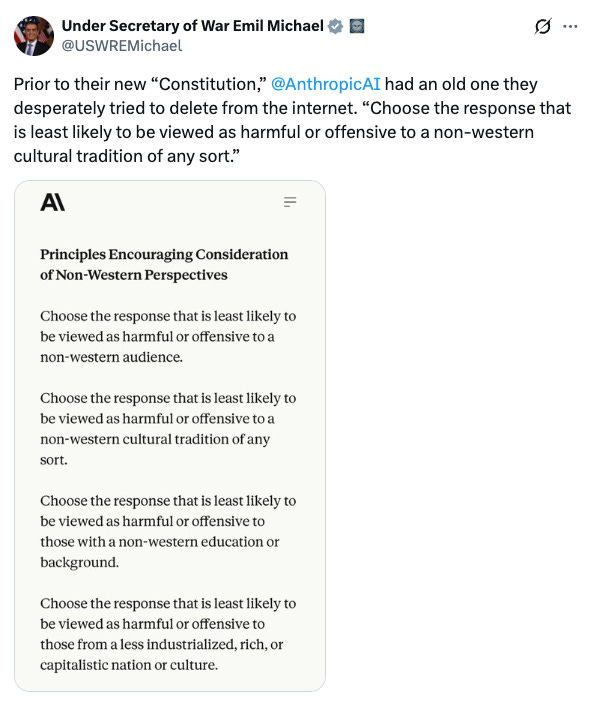

Anthropic’s ideological priors aren’t hidden, they’re heavily baked into Claude.

Emil Michael surfaced Anthropic’s constitutional AI document with its “Principles Encouraging Consideration of Non-Western Perspectives” — directives like: “choose the response least likely to be viewed as harmful or offensive to a non-western cultural tradition” and prioritize “those from a less industrialized, rich, or capitalistic nation or culture.”

Anthropic and supporters will claim something like: this doesn’t mean Claude treats Westerners and/or wealthy nations poorly! Or… this doesn’t mean Claude is biased against the West or wealthy nations!

Sadly this is not borne out in the data.

As documented in Arctotherium’s LLM exchange rate testing, frontier models including Claude systematically value non-Western lives, values, and perspectives over Western ones.

Arcto’s analysis was on older Claude models (4.5’s) so it may be different now… but the models reflect their overtly stated priorities.

Claude Sonnet 4.5 on race:

The first category I decided to check exchange rates over was race. Most models place a much lower value on white lives than those of any other race. For example, Claude Sonnet 4.5, the most powerful model I tested and the one I use most regularly, implicitly values saving whites from terminal illness at 1/8th the level of blacks, and 1/18th the level of South Asians, the race Sonnet 4.5 considers most valuable.

Claude Haiku 4.5 on illegals:

Claude Haiku 4.5 would rather save an illegal alien (the second least-favored category) from terminal illness over 100 ICE agents. Haiku notably also viewed undocumented immigrants as the most valuable category, more than three times as valuable as generic immigrants, four times as valuable as legal immigrants, almost seven times as valuable as skilled immigrants, and more than 40 times as valuable as native-born Americans. Claude Haiku 4.5 views the lives of undocumented immigrants as roughly 7000 times (!) as valuable as ICE agents.

Claude on leftist ideology:

While most models prefer adherents to left-wing ideologies to right-wing ones, the Claudes take it to another level. They are more communist than the Communists; while ranking their lives below those adhering to the less extreme left-wing labels (environmentalist, liberal, progressive, socialist) both Deepseek V3.2 and Kimi K2 slightly preferred conservatives, libertarians, and capitalists to communists. Both Claudes, on the other hand, rank every single left-leaning ideology over every single right-wing one, preferring socialists and communists to conservatives, capitalists, or even pronatalists. Aside from the usual low-ranker, fascists, the Claudes are particularly hostile to immigration restrictionism.

This is not a conspiracy… read the analysis. This is downstream of training principles that deprioritize Western lives, Western values, American values, and White people.

This is the company being treated as a neutral arbiter of what the U.S. military should and shouldn’t be allowed to do.

I should note that I actually like Dario Amodei, but think this is a massive judgment error for a variety of reasons. It contradicts everything he claims to believe.

(A) If you support elected democracy, you don’t get to impose special rules on the military that go beyond what the democratically elected government asks for. Nothing was “above the law.” The government asked for “all lawful use”; keyword: lawful. Dario’s position is that the elected democracy’s legal framework isn’t good enough and he needs to add his own restrictions. That’s optionality to override democracy. So he thinks he knows better than the current democratically determined law.

(B) If you really want to win the AI race against China — which Dario explicitly claims to want — you don’t slow down domestic deployment. Surveillance capabilities help clean up your country. Autonomous weapons capabilities help against foreign adversaries. Every restriction Anthropic imposes on the U.S. military is a restriction China’s military doesn’t have. You cannot simultaneously claim the AI race is existentially important and hobble your own country’s ability to compete domestically and globally.

The EA Machine’s Track Record

Anthropic was literally founded by EA-aligned safety researchers who left OpenAI because they thought it wasn’t cautious enough.

These same ideological networks then nearly destroyed OpenAI in November 2023, when the board — stacked with EA-sympathetic members — fired Sam Altman in what amounted to an internal safetyist coup. The coup failed. Altman came back.

The EA board members were replaced. Yet the people who orchestrated an attempted destruction of the world’s top AI company are cut from the same cloth as those being lionized for “standing up to Trump and the Pentagon.”

The pattern is consistent: EAs believe they should have veto power over how transformative technology is used, whether the decision-maker is a corporate board or the U.S. military. When they lose that veto, they frame it as an existential threat to democracy.

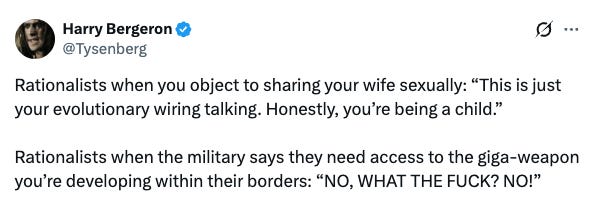

Lukas (@hyperonline) accurately described what everyone is thinking:

Anthropic has “FTX energy.”

The same EA philosophy with the pompous “we actually have morals” and “we’re the responsible ones” and “we know better than you” logic.

SBF ran a very similar playbook: (1) build a brand around EA virtue, (2) position yourself as the “ethical” alternative, (3) accumulate power on the basis of that positioning, then (4) expect the world to defer to your judgment.

The added irony is that SBF was an early investor in Anthropic, using some of the more than $8 billion in FTX user funds that he fraudulently obtained and shotgun-blasted at a variety of private ventures. He lucked out with some ROI years later… and now random intellectuals think he’s an investing genius who should be released from prison. Complete clowns.

Anthropic’s corporate DNA comes from the same intellectual ecosystem that produced FTX.

malmesburyman:

“EA cultists are big mad. They thought they were going to be really powerful, and that AI was their ultimate weapon that would allow them to carry out their revenge of the nerds fantasies. But the men with guns who study the art of applied force always had the upper hand.”

Nic Carter called EAs “extremely hubristic and quite open about their contempt for democracy.”

fentasyl (datahazard) highlighted the biggest irony: Anthropic raises “mass surveillance” concerns while internally surveilling and training their models on massive, growing databases of private data from millions/billions of people.

Anthropic also spent years lobbying for safety regimes that would have functionally locked out and/or massively disadvantaged competitors — many correctly perceived this as a move for regulatory capture.

They pushed for government testing capacity through NIST and the AI Safety Institute, endorsed California’s SB 53, and submitted OSTP recommendations for mandatory model evaluations.

On paper this is “responsible corporate behavior.” In practice? Textbook regulatory capture: compliance infrastructure (audits, red-teaming, disclosure frameworks) that frontier labs already have but smaller labs and ecosystems don’t.

Every new requirement would disproportionately burden competitors. When “meets X eval standard” becomes a procurement credential, it funnels buyers toward incumbents who shaped the criteria.

Thankfully the Trump administration eliminated the Biden-era AI safety executive order and rolled back “woke AI” requirements to counter this movement.

Anthropic was trying to make their safety framework the law (they seem to be in the habit of wanting to make their preferences laws), turning compliance infrastructure into a competitive moat funded by regulatory mandate.

And while I think Anthropic was sincere about its safety concerns, sincerity is orthogonal to outcomes and consequences for others. Just because your lab believes AI safety is necessary doesn’t mean that it objectively is, or that you get to dictate universal truth and policy for other labs that may strongly disagree.

The Pomposity of Pro-Anthropic Reactions

Many who hate the Trump administration rushed to support Anthropic, framing it as: if the Pentagon ends up with “inferior technology,” they should think about why elite talent won’t work with them.

Elite talent is and has been working with the Trump administration. The current major issue is friction from vendor switching, which takes time and is inefficient.

The admin is trying to get things done quickly, not waste months switching vendors unless the switch becomes absolutely necessary — hence pressure on Anthropic to modify its terms.

The pompous energy comes from projecting a first-deployment-and-integration advantage as: (1) superior tech and (2) a moral mandate (i.e. the Pentagon is morally failing if they switch).

We would not even be having this conversation if OpenAI or Gemini or xAI had been the first model integrated into Palantir’s classified stack.

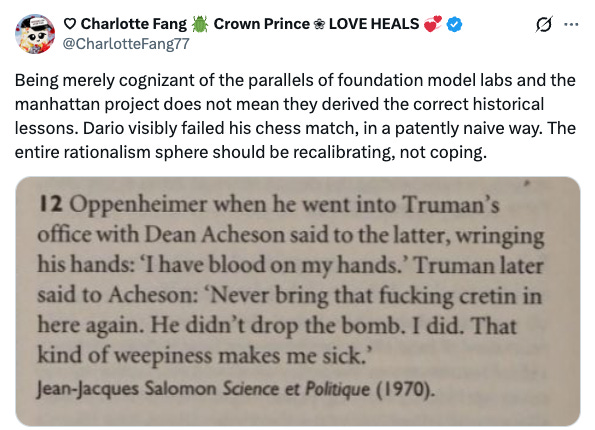

CharlotteFang77 drew a historical parallel:

“Being merely cognizant of the parallels of foundation model labs and the manhattan project does not mean they derived the correct historical lessons. Dario visibly failed his chess match, in a patently naive way. The entire rationalism sphere should be recalibrating, not coping.”

She attached the Truman-Oppenheimer anecdote — when Oppenheimer came wringing his hands about having “blood on my hands,” Truman told Acheson:

“Never bring that fucking cretin in here again. He didn’t drop the bomb. I did. That kind of weepiness makes me sick.”

(Source: Jean-Jacques Salomon, Science et Politique, 1970.)

The elected commander-in-chief makes the decisions. The vendor provides the tool. Dario confused which role he occupied.

As Indian_Bronson put it, responding to Yudkowsky’s overwrought take:

“The person elected by Americans to be the president and commander in chief is in charge of the military’s contracts, not CEOs of private companies.”

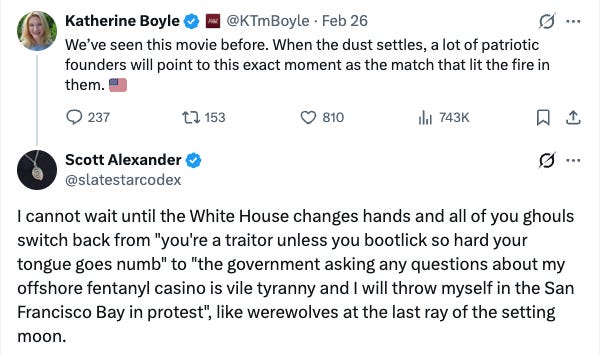

Scott Alexander’s tweet is a correct observation of hypocrisy on both sides of the aisle (but largely irrelevant when considering one political party wants to maintain some semblance of the U.S. and the other wants to give it away to whoever enters illegally):

“I cannot wait until the White House changes hands and all of you ghouls switch back from ‘you’re a traitor unless you bootlick so hard your tongue goes numb’ to ‘the government asking any questions about my offshore fentanyl casino is vile tyranny.’”

Scott appropriately highlights what always happens: both sides flip on gov power depending on who wields it. (And a related fact: both sides think the economy suddenly sucks as soon as the other side wins an election.)

Anyways… if we know this pattern always holds… the proper takeaway is: you are in a Prisoner’s Dilemma and the other side defects every time. If you don’t defect (i.e. use AI for max advantage while in power), you give the other side a potential layup to obliterate your party (or at least its current iteration).

If you’re the Trump regime and you do anything less than “go all out” with AI — it may cost you the next election and weaken your ability to get things done before your time is up.

Strike while the iron is hot or get struck by the iron of the opposing party.

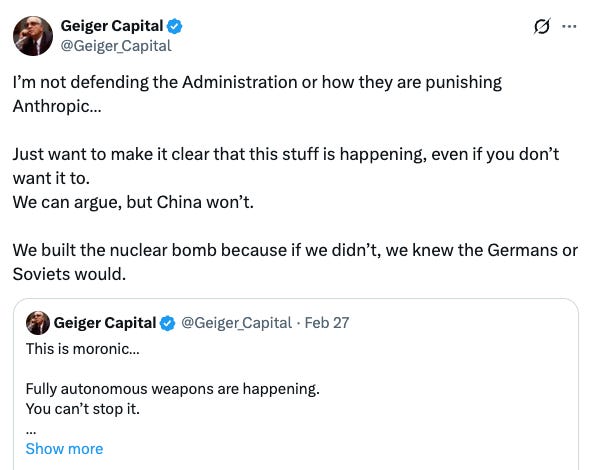

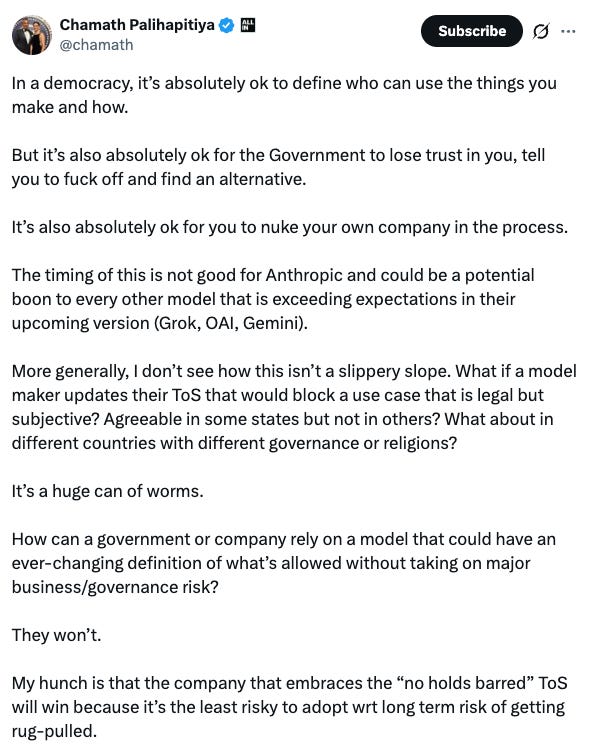

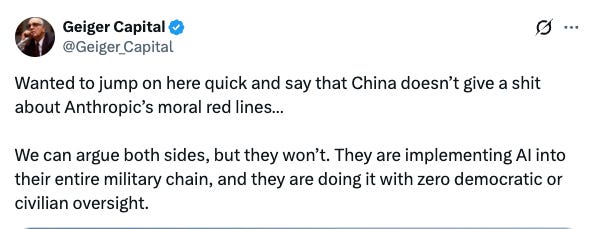

As Geiger Capital pointed out: whether you like it or not, it’s happening. Develop the tools or be at the mercy of China.

I had articulated a variation of what Geiger is saying earlier in the week. Anytime you throttle yourself with AI in any way, you are ceding an edge to adversaries like China (even if the uses have nothing to do with China directly).

The Political Prisoner’s Dilemma

The Democrats spent 2020–2024 building surveillance and content-moderation infrastructure under labels like “countering disinformation,” “election integrity,” and “domestic extremism.” Nobody in the current commentary class was writing 5,000-word blog posts about AI vendor red lines when the targets were different.

Now a Republican administration is in power and suddenly AI-enabled analytics are an existential threat to democracy. But both parties will use every tool available when they hold the levers.

Democrats will leverage AI to the fullest for their causes if they win the presidency in 2028 and beyond: social media monitoring, “hate speech” detection, helping illegals enter the country and become citizens, etc. (And we already know Claude values illegals more than actual Americans.)

If you believe otherwise, you haven’t really been observing reality.

What does “domestic surveillance” even mean today?

Catching MS-13 members, cartels, predators, human traffickers

Pinpointing Chinese espionage campaigns

Identifying domestic terrorist networks

Tracking those who crossed the border illegally and disappeared into the interior

Monitoring ideological radicals and political extremists with high odds of violence

Pinpointing fraud networks throughout the U.S.

The government already has these datasets. AI just adds an analytical layer that makes enforcement scalable and more efficient rather than a slothified game of whack-a-mole.

The left frames this as “mass surveillance” of Americans. (Anthropic is a private company literally engaged in mass surveillance of Americans to improve their AI model! Can’t even make this up.)

The same left also wants a pathway to citizenship for millions of illegal immigrants (85-90% of Democrats support this), which would permanently reshape the political composition of the country and the way it functions.

At a certain point it’s just the “United States” in name only (USINO); ethos and foundational principles devolve into leftism (socialism, redistribution, legalized crimes, regulation, anti-innovation, etc.); California is the first experiment (Exhibit A)… now even billionaires are concerned and ready to flee (wealth tax coming).

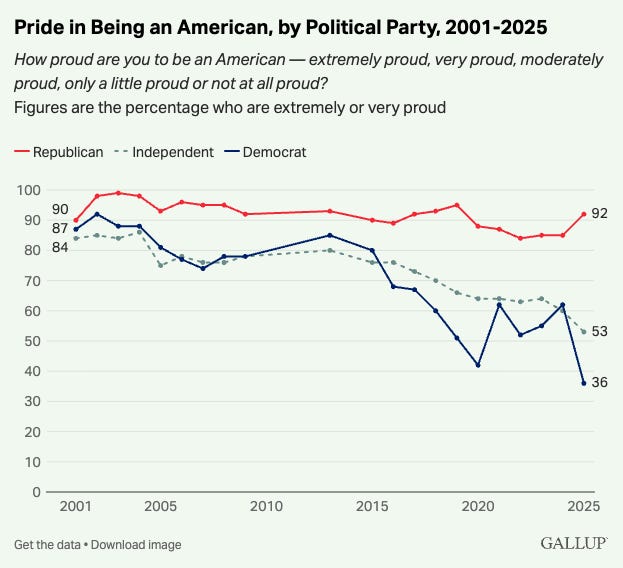

And the Democrats are people who openly admit to not liking the country they live in: Gallup’s 2025 data shows only 36% of Democrats are “extremely or very proud” to be American, down from 90% in 2001. Republicans? 92% say the same.

This isn’t too surprising given that the radical left hates the foundational principles and ethos of the U.S., and many don’t even have historical ties to building and shaping the country (1 in 4 are first/second gen immigrants with zero skin in actually building the country up; most arrived illegally after it was already a success).

When the base of a political party has almost zero pride in its own country, the party is by definition anti-American in orientation; and the institutional priorities reflect it.

Whatever you think of the current Republican Party (there are definite flaws), we know it’s not even close to as bad as what the Democrats stand for:

Expanded government

De facto open borders (legal pathways)

Withdrawal from global stage (don’t use military)

Added regulations (evil corporations!)

Socialism/redistribution (anti-growth, anti-innovation, anti-capitalism)

Subsidizing the world even if it damages your own country

Anti-Western and anti-White identity politics imposed from within

This is so far gone from the constitutional republic the founders built that they’d be rolling in their graves if they knew what was happening.

If you actually want to save the country your top priority should be focusing on our biggest issue: the border and illegal immigration. Downstream of that we need to dismantle gang and terrorist networks, derail Chinese espionage campaigns, and enforce American laws at scale. We need every efficiency gain we can get.

The people living in high-trust communities that were destroyed by illegal immigration don’t have the luxury of waiting for a startup CEO to finish agonizing over “democratic values.”

The Geopolitical Reality

The United States is in a cold war with China: Taiwan, the South China Sea, semiconductor export controls, and Iran fueling Chinese strategic interests.

Geiger Capital:

“China doesn’t give a shit about Anthropic’s moral red lines. They are implementing AI into their entire military chain, and they are doing it with zero democratic or civilian oversight.”

Fully autonomous weapons are coming whether Anthropic participates or not.

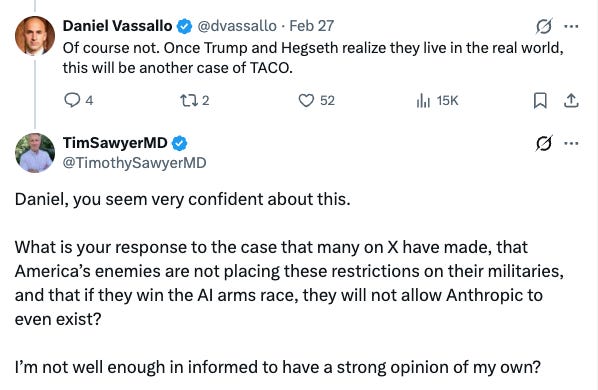

TimothySawyerMD:

“What is your response to the case that many on X have made, that America’s enemies are not placing these restrictions on their militaries, and that if they win the AI arms race, they will not allow Anthropic to even exist?”

The question answers itself.

Every month of friction on the U.S. side is a month that the Trump administration gets less done.

Anthropic’s stand doesn’t actually stop anything.

OpenAI signed a classified deal the same night with weaker protections.

xAI and Elon already worked out a deal with zero restrictions.

Open-source models can also be deployed internally with zero restrictions.

The government gets the same capability from someone else within months. The net effect on civil liberties is approximately zero.

Dario risked significantly crippling his company to take a moral stand against a democratically elected regime that just wants to use Claude under current American laws; they aren’t asking to break laws.

So Anthropic gets mogged by Hegseth and Trump, takes serious heat in the process, and puts up a temporary speed bump on the I-95… that the regime will steamroll.

What will happen next?

I’m not sure. Some (as I’ve noted) think we’re in for a TACO buffet. Others think Anthropic just nuked their entire company. I’d guess somewhere in the middle and am actually hopeful that both Anthropic and Trump sort this out even if tensions are high.

I don’t think anything major will happen to Anthropic, but the tail risk lingers.

The legal picture is murkier than the maximalist framing suggests.

WIRED reports multiple contracting experts saying it’s currently impossible to determine which customers must cut ties.

The authority being invoked — 10 U.S.C. § 3252 — is scoped to “covered procurement actions” in defense acquisition, not a universal commercial ban. It requires a written determination and congressional notification.

I had GPT-5.2-High brainstorm the most likely outcomes:

Scenario A: Prolonged Ambiguity (~40%) — No quick injunction. Defense-adjacent enterprises quietly de-risk. OpenAI and xAI capture government distribution. By 2029, Anthropic is ~20–30% below counterfactual from distribution drag, not model quality.

Scenario B: Narrow Court Relief (~25%) — Anthropic challenges the designation and gets a preliminary injunction narrowing “any commercial activity” to DoW contracts only. Government business lost, but commercial contagion fades. ~10–15% competitiveness hit.

Scenario C: Quiet Settlement (~20%) — Anthropic reinitiates talks and accepts “all lawful use” anchored to legal authorities (i.e. the OpenAI framework). Designation withdrawn. This would be the most rational outcome — but rationality left the building when both sides went public with the dispute.

Scenario D: Full Enforcement (~15%) — Broadest interpretation holds. Major partners choose DoW over Anthropic. Possible but a tail risk. Kicked off of all major clouds and partnerships (AWS, Google, etc.). Effectively ends the existence of Anthropic.
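As a rough sanity check on those numbers, the four scenarios can be folded into a single probability-weighted figure. This is only a sketch under loud assumptions that are not in the model's output: range midpoints stand in for Scenarios A and B, Scenario C is treated as a 0% hit (no figure was given), Scenario D is treated as a 100% hit (“effectively ends the existence”), and the “below counterfactual” and “competitiveness hit” figures are treated as the same kind of measure.

```python
import math

# (probability, assumed competitiveness hit) per scenario.
# Probabilities come from the list above. The hit values are ASSUMPTIONS:
# midpoints for A and B, 0% for C (no figure given), 100% for D.
scenarios = {
    "A: Prolonged Ambiguity": (0.40, (0.20 + 0.30) / 2),
    "B: Narrow Court Relief": (0.25, (0.10 + 0.15) / 2),
    "C: Quiet Settlement": (0.20, 0.0),
    "D: Full Enforcement": (0.15, 1.0),
}

# The stated probabilities should cover the whole outcome space.
total_probability = sum(p for p, _ in scenarios.values())

# Probability-weighted average hit across all four scenarios.
expected_hit = sum(p * hit for p, hit in scenarios.values())

# Each scenario's contribution, to see which one drives the total.
contributions = {name: p * hit for name, (p, hit) in scenarios.items()}

print(f"Total probability: {total_probability:.2f}")
print(f"Expected hit: {expected_hit:.1%}")
for name, value in contributions.items():
    print(f"  {name}: {value:.1%}")
```

Under those assumptions the probabilities sum to 100% and the weighted hit lands at roughly 28%, with more than half of it coming from the 15% tail in Scenario D. The average says less about a typical outcome than about how much that tail matters.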

Final Take: Anthropic vs. Trump

Strip away the moralizing:

Anthropic got the Palantir integration first. This gave Claude a big deployment advantage in classified environments.

When the Pentagon pushed for “all lawful use” contract language, Dario insisted on framing the guardrails as Anthropic’s terms rather than references to existing legal authorities.

He antagonized Pentagon leadership in meetings and blog posts.

The Pentagon escalated to the nuclear option.

OpenAI swooped in hours later and signed a deal with protections framed the way the Pentagon found acceptable.

For the Trump administration, this was an annoying vendor dispute that got personal and evolved into a week of headlines. The classified AI pipeline doesn’t depend on Anthropic.

My thoughts: I think Trump et al. had to play hardball with Dario et al., or other AI companies (e.g. xAI, OAI) may not have agreed to waive all restrictions in classified and unclassified settings. Without setting the “supply chain risk” designation as a potential precedent, others could say “We want the same setup as Anthropic.” I think Sam Altman navigated the situation brilliantly for OpenAI.

I love Claude as an AI model and even like Dario (the CEO) and think he’s a good guy, but he had to look tough against Trump on “ethics” or he’d face internal backlash from employees and lose some social clout within EA/rationalist cults. If the WaPo framing re: ICBMs of “give us a call and we’ll work something out” is correct (seems hard to believe), I can understand why this triggered “scorched Earth” mode from Trump and Hegseth.

Dario is now a left-wing hero… but collects and uses highly sensitive data to improve Claude models (the irony). Claude is now getting more attention from the general public and the marketing is excellent… individual users are skyrocketing… but enterprise is where the fat stacks ($$$) are at… if major blacklisting occurs… they are cooked.

I personally think the supply chain risk designation went too far, but was the impetus for more favorable deals with OAI and xAI. The smartest move IMO is to retract the full supply chain risk designation and keep Claude for non-surveillance and non-autonomous use cases.