Anthropic's "AI Mass Surveillance" Stand Doesn't Survive Scrutiny

Anthropic is already mass surveilling Americans to improve its models, and "mass surveillance" isn't what you think.

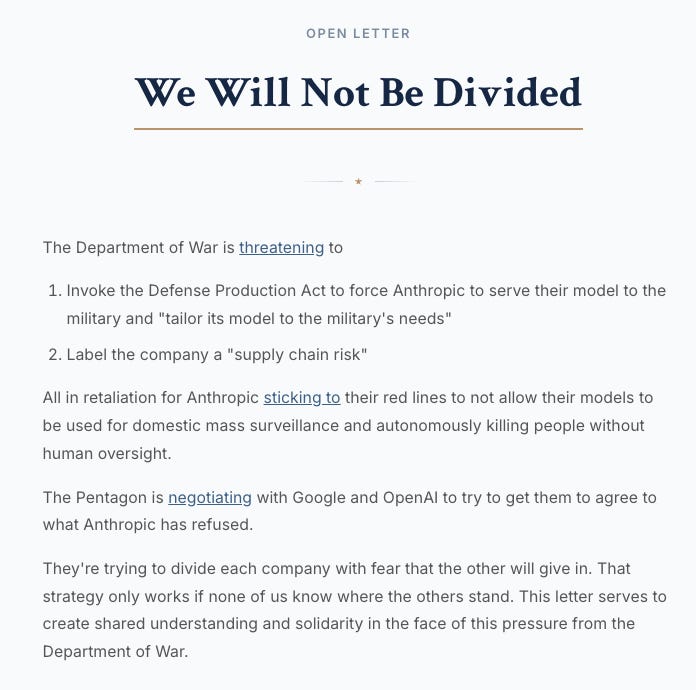

Anthropic CEO Dario Amodei refused the Pentagon’s demand to allow its AI model Claude to be used for “all lawful purposes,” citing concerns about mass domestic surveillance and fully autonomous weapons.

The Pentagon blacklisted Anthropic as a supply chain risk.

Trump ordered all federal agencies to stop using Anthropic.

OpenAI swooped in to sign a deal with the Pentagon almost immediately.

Anthropic’s app shot to the top of the charts, the tech press championed Dario as a hero, open letters and petitions in support of Anthropic circulated, and employees posted about being on the “right side of history.”

But there's one major problem: the argument doesn't survive scrutiny.

Note 1: Claude is my current favorite AI model, and I personally think Dario is well-intentioned, even if I disagree with him.

Note 2: Anthropic’s other red line (fully autonomous weapons) rests on an equally incoherent argument but deserves separate treatment. This piece addresses the surveillance component.

What Is Dario Worried About?

In his public statement, Dario wrote the following:

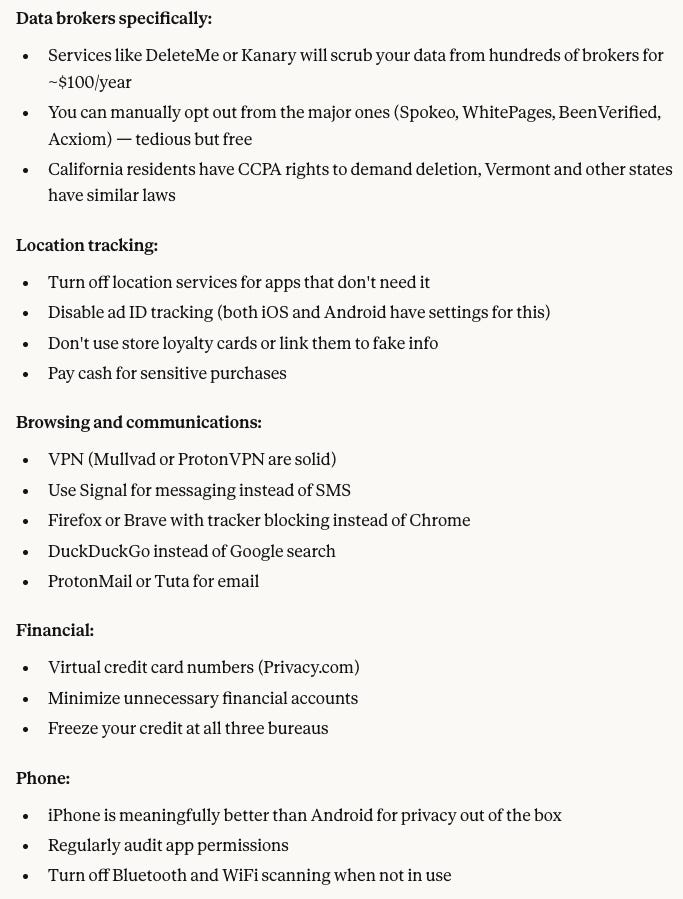

Under current law, the U.S. government can purchase detailed records of Americans’ movements, web browsing, and associations from commercial data brokers without obtaining a warrant.

The intelligence community has acknowledged this raises privacy concerns.

AI can now stitch this scattered, individually innocuous data into comprehensive profiles (automatically and at scale).

Dario believes the “law hasn’t caught up” with AI’s ability to synthesize commercially available data. He wants contractual language preventing the Pentagon from using Claude for bulk collection and synthesis of Americans’ publicly available information.

The Pentagon refused, insisting on “all lawful purposes,” widely considered the standard baseline for government contracts so that agencies don’t get hamstrung by a vendor’s subjective terms of service in high-stakes operations.

“All Lawful Purposes” Is Not a Power Grab

An “all lawful purposes” clause doesn’t create new surveillance powers. It removes a private contractual veto over lawful government missions. The Fourth Amendment, FISA, EO 12333, DoD directives — all still apply.

This is a standard procurement baseline.

Vendors don’t get to decide which lawful missions the government can pursue. Without it, every AI company negotiates a different list of political ToS carve-outs, and procurement becomes hostage to the internal politics of private firms. If Dario thinks certain lawful practices should be banned, the remedy is legislation and litigation — not “Anthropic’s terms of service becomes national policy.”

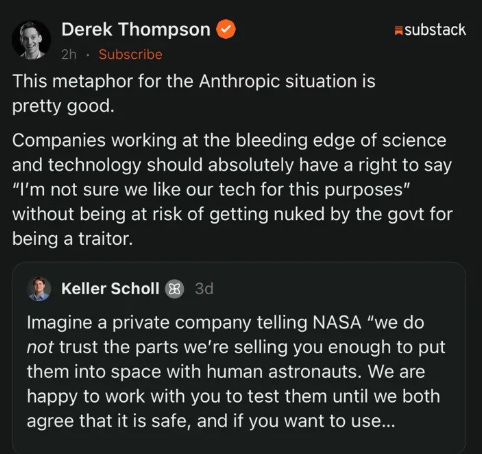

A trending analogy frames Anthropic as a parts supplier telling NASA “we don’t trust the parts we’re selling you.” This completely misunderstands the dispute.

Anthropic isn’t saying Claude doesn’t work — these are models they’ve tested, red-teamed, and shipped to millions of consumers. The dispute is about who gets to use it and for what. That’s the difference between Boeing saying “this airframe isn’t rated for that stress load” vs. “we don’t like who’s flying the plane.”

And the DoD has its own rigorous evaluation pipelines (red teams, CDAO) and can draw on NIST standards. The subtext of Anthropic’s position is “we don’t trust the government’s judgment,” which is absurd coming from a company that sells API access to anyone with a credit card.

“Mass Surveillance” Is Rhetorical Trickery

“AI-enhanced law enforcement with commercially available datasets to detect crime” sounds fairly reasonable and boring. “Mass domestic surveillance” sounds terrifying and dystopian. They describe the same activity.

When most people hear “mass surveillance,” they think of 24/7 trackers on all Americans: an Orwellian panopticon where federal agents monitor your grocery runs and group chats in real time. That’s not even remotely close to what’s at issue.

In practice: the U.S. government buys (1) commercially available data that (2) people voluntarily gave to apps and mega corps, then (3) runs it through AI synthesis and pattern recognition to identify criminal networks… that’s it.

The majority of government “mass surveillance” is uncontroversially beneficial:

Intelligence analysis

Fraud and scam detection

Tracking extremists with high odds of violence

Counterterrorism

Efficient policing

Uncovering child exploitation and human trafficking networks

Pinpointing fentanyl supply chains

Cyber defense

Identifying illegal immigrants

Hunting gang/cartel networks

Pinpointing espionage campaigns

Think about what opposing AI-enhanced data synthesis (i.e., “surveillance”) means:

Fentanyl. AI can cross-reference financial flows, communication patterns, and logistics data to map a distribution network in hours. Without AI, analysts sift through the same data for weeks or months. During that delay, people die — 80,000+ overdose deaths per year.

Child exploitation. NCMEC’s CyberTipline received 20.5 million reports in 2024. No human workforce can triage that volume. Every day an AI isn’t processing those reports is a day a child remains in an abusive situation because a lead sat in a queue.

Human trafficking. AI can identify patterns across hotel bookings, online ads, financial transactions, and movement data. The manual alternative means victims stay in captivity longer — or indefinitely.

Espionage. AI can link compromised devices, stolen-identity clusters, and laundering routes to identify foreign intelligence operations in hours instead of months.
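At its simplest, "linking" in these examples is record linkage. Any two records that share an identifier (a device ID, a phone number, an account) are merged into one cluster, and chains of shared identifiers grow into networks. Below is a minimal union-find sketch in Python. The field names and toy records are hypothetical, and real systems add fuzzy matching, confidence scores, and safeguards against false links that this sketch omits:

```python
from collections import defaultdict


def link_records(records: list[dict[str, str]]) -> list[set[int]]:
    """Group record indices into clusters connected by any shared (field, value)."""
    parent = list(range(len(records)))

    def find(i: int) -> int:
        # Walk up to the cluster root, halving the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    def union(a: int, b: int) -> None:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Remember the first record that carried each identifier; any later
    # record with the same identifier is merged into that record's cluster.
    first_seen: dict[tuple[str, str], int] = {}
    for idx, rec in enumerate(records):
        for field, value in rec.items():
            key = (field, value)
            if key in first_seen:
                union(first_seen[key], idx)
            else:
                first_seen[key] = idx

    clusters: dict[int, set[int]] = defaultdict(set)
    for idx in range(len(records)):
        clusters[find(idx)].add(idx)
    return list(clusters.values())


if __name__ == "__main__":
    # Hypothetical toy data: each dict is one record from a different source.
    records = [
        {"device": "D1", "phone": "555-0101"},
        {"phone": "555-0101", "account": "A9"},
        {"account": "A9", "device": "D7"},
        {"device": "D3"},
    ]
    print(link_records(records))  # [{0, 1, 2}, {3}]
```

Records 0 and 2 share no field directly. They still land in the same cluster through record 1. That kind of transitive link is tedious to find by hand and trivial to find in code.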

Thinking that it’s evil or unconstitutional to buy publicly available datasets and synthesize them with AI to catch criminals more quickly is a ridiculous position.

Well, it’s alright to catch criminals…

But hol’ up there buckaroo… not so fast!…

We’re gonna need you to use CTRL + F through 10,000-page documents instead…

Scale is a feature, not a bug.

Obviously, nobody should support 24/7, ultra-granular, privacy-invading surveillance of U.S. citizens, but that’s already mostly illegal and not the debate here. The debate is about public datasets being used to catch criminals more efficiently.

And even for this use case, if you think there are major privacy concerns, you could set up an anonymized Chainlink-type network with oracles that mask all personally identifiable information until enough “heat signals” or “red flags” are detected. Only once a threshold is crossed could an individual be investigated further, and afterward they could be re-anonymized into the system.
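The threshold idea above (identities masked until enough red flags accumulate) can be sketched without any blockchain or decentralized-oracle machinery. This is a single-process stand-in, not Chainlink. A keyed hash (HMAC-SHA256) gives each person a stable pseudonym, and a gatekeeper releases the real identity only once a pseudonym has collected a hypothetical `threshold` of distinct flag types. The class name, flag labels, and threshold value are all illustrative assumptions:

```python
import hashlib
import hmac
from typing import Optional


class ThresholdGate:
    """Hypothetical sketch of threshold-gated pseudonymization.

    Analysts only ever see pseudonyms. A real identity is released only
    after its pseudonym accumulates `threshold` distinct red-flag types.
    """

    def __init__(self, secret_key: bytes, threshold: int) -> None:
        self._key = secret_key   # held by the gatekeeper, not by analysts
        self.threshold = threshold
        self._flags: dict[str, set[str]] = {}  # pseudonym -> distinct flag types
        # pseudonym -> real identity; a plain dict here, real escrow in practice
        self._escrow: dict[str, str] = {}

    def pseudonym(self, identity: str) -> str:
        # Keyed hash: stable for the same person, but it cannot be recomputed
        # (or brute-forced from a list of names) without the secret key.
        digest = hmac.new(self._key, identity.encode(), hashlib.sha256)
        return digest.hexdigest()[:16]

    def ingest(self, identity: str, flag: str) -> str:
        """Record a red flag against an identity and return only its pseudonym."""
        p = self.pseudonym(identity)
        self._escrow[p] = identity
        self._flags.setdefault(p, set()).add(flag)  # repeats of one flag count once
        return p

    def reveal(self, p: str) -> Optional[str]:
        """Release the identity only once the threshold is crossed."""
        if len(self._flags.get(p, ())) >= self.threshold:
            return self._escrow[p]
        return None

    def reanonymize(self, p: str) -> None:
        """Clear accumulated flags so the person drops back below the threshold."""
        self._flags.pop(p, None)


if __name__ == "__main__":
    gate = ThresholdGate(b"demo-key", threshold=3)
    p = gate.ingest("alice", "structured_deposits")
    gate.ingest("alice", "burner_phone")
    print(p, gate.reveal(p))  # pseudonym only; identity is still None
    gate.ingest("alice", "shell_company")
    print(gate.reveal(p))     # alice
    gate.reanonymize(p)
    print(gate.reveal(p))     # None
```

Counting distinct flag types rather than raw events is one design choice among several. It keeps one noisy signal, repeated many times, from tripping the threshold alone.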

And if we ever got to the point where legal “mass surveillance” via bulk public datasets were significantly more damaging than beneficial, it would mostly reflect an erosion of human capital, not a flaw in the tool itself. Liberia has a constitution modeled on the U.S. Constitution. How much do you think Liberia functions like the United States?

Constitutions and laws don’t matter unless you have people willing to enforce and abide by them.

With high human capital in government, AI surveillance (i.e. data synthesis) is an amplifier of effective law enforcement.

With low human capital, no anti-surveillance law protects you: officials will just ignore it and do what they want… the courts end up corrupted and there’s no backlash anyway.

The Math Makes This Embarrassing

Baseline harms are so large that even microscopic improvements justify “AI surveillance” (of the kind the government can legally conduct).

GAO estimates annual federal fraud losses at $233B–$521B. A 1% reduction is $2.3B–$5.2B per year.

CDC reports 80,391 overdose deaths in 2024. Using the DOT’s $13.7M value of a statistical life, a 0.1% reduction (~80 lives) is worth ~$1.1B per year.

The FBI’s IC3 reported internet crime losses exceeding $16B in 2024, and the FTC reported consumer fraud losses above $12.5B.

IBM reports that organizations using security AI and automation extensively saved an average of $1.9M per breach and shortened the breach lifecycle by 80 days.

IRS estimates a $696B gross tax gap. A 1% improvement is ~$7B per year.

Conservative combined annual benefit: $11B–$15B. Base case: $20B–$30B+. At mature deployment: $40B+.
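Three of the line items above are simple percentage-of-baseline arithmetic, and reproducing them makes the inputs explicit. This is a sketch using only the figures cited in the text. The reduction percentages and the DOT value of a statistical life are the article's assumed inputs, not measured effects of AI:

```python
# Figures as cited in the text (USD). The reduction percentages and the
# value of a statistical life (VSL) are the article's assumptions.
FRAUD_LOSS_RANGE = (233e9, 521e9)  # GAO: annual federal fraud losses
OVERDOSE_DEATHS_2024 = 80_391      # CDC: overdose deaths in 2024
VSL = 13.7e6                       # DOT value of a statistical life
GROSS_TAX_GAP = 696e9              # IRS: gross tax gap


def fraud_savings(reduction: float) -> tuple[float, float]:
    """Low/high savings from cutting federal fraud losses by `reduction`."""
    low, high = FRAUD_LOSS_RANGE
    return low * reduction, high * reduction


def overdose_value(reduction: float) -> tuple[float, float]:
    """Lives saved and their monetized value at the DOT VSL."""
    lives = OVERDOSE_DEATHS_2024 * reduction
    return lives, lives * VSL


def tax_gap_recovery(reduction: float) -> float:
    """Revenue recovered by closing `reduction` of the gross tax gap."""
    return GROSS_TAX_GAP * reduction


if __name__ == "__main__":
    f_low, f_high = fraud_savings(0.01)
    lives, life_value = overdose_value(0.001)
    tax = tax_gap_recovery(0.01)
    print(f"Fraud, 1% cut:       ${f_low / 1e9:.1f}B to ${f_high / 1e9:.1f}B")
    print(f"Overdoses, 0.1% cut: {lives:.0f} lives, ~${life_value / 1e9:.1f}B")
    print(f"Tax gap, 1% cut:     ${tax / 1e9:.1f}B")
    subtotal_low = f_low + life_value + tax
    subtotal_high = f_high + life_value + tax
    print(f"Subtotal:            ${subtotal_low / 1e9:.1f}B to ${subtotal_high / 1e9:.1f}B")
```

Run as-is, the three items come to about $2.3B–$5.2B, ~80 lives valued at ~$1.1B, and ~$7.0B. That is a subtotal of roughly $10.4B–$13.3B. The internet-crime, consumer-fraud, and breach-cost figures are not priced in this sketch.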

Even if you assign a $500B cost to a catastrophic misuse scenario, the base case annual benefit (~$25B) means you’d need at least a 5% annual probability of every institutional check failing simultaneously for the expected value (EV) to flip negative. Most honest people would put that well under 1%.
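Written out, the break-even test above is a one-line expected-value comparison. This sketch keeps the paragraph's own simplifications: a steady annual benefit $B$, a single catastrophic cost $C$, and an annual probability $p$ that every check fails at once. It ignores discounting and partial-misuse scenarios:

```latex
\begin{aligned}
\mathbb{E}[\text{net annual value}] &= B - pC > 0 \iff p < p^{*} = \frac{B}{C} \\
p^{*}_{\text{base}} &= \frac{\$25\text{B}}{\$500\text{B}} = 0.05 \\
p^{*}_{\text{conservative}} &= \frac{\$11\text{B}}{\$500\text{B}} = 0.022
\end{aligned}
```

Even at the low end of the conservative benefit range, the break-even probability is 2.2% per year. That is still above the sub-1% estimate in the text.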

I had GPT-5.4-Pro build a full EV ledger: a steady-state net EV of $41.9B/year, a realized 2026 net EV of $23.3B, and an 88% probability of being net positive. And that ledger leaves child exploitation, counterintelligence, and military ISR (intelligence, surveillance, and reconnaissance) completely unpriced.

Follow this logic to its conclusion: if the net effect is asymmetrically favorable, then opposing it is de facto pro-crime.

Even if that isn’t the intention, the consequence is that criminal networks get more time to operate and victims wait longer for rescue (assuming they get rescued at all).

The “Slippery Slope” and “Loyalty Scores”

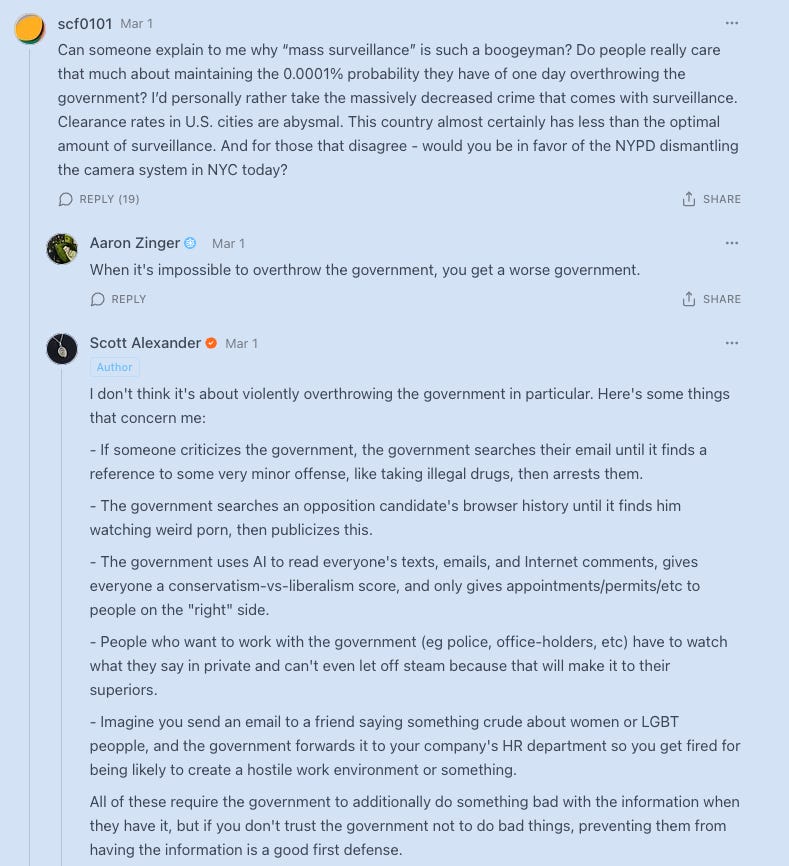

Scott Alexander wrote a thorough legal explainer concluding that AI could theoretically be used to build a citizen “loyalty score.” And I appreciate his arguments.

If “mass surveillance” is used the way Scott implies, then I’m firmly on his side and there’s zero disagreement.

But every scenario he described involves private communications not in commercially available datasets:

Emails/texts: Protected by the Fourth Amendment and Stored Communications Act. Require warrants.

Browser history: Some browsing metadata is sold by brokers (the NSA has purchased it), but Scott’s scenario of searching a specific person’s browser history to find dirt is targeted surveillance requiring individualized access — not bulk pattern matching on commercially available data.

Private speech: Requires a Title III wiretap order — one of the hardest things to get in U.S. law.

Intercepting email: Federal crime under 18 U.S.C. § 2511.

The “all lawful purposes” debate is about commercially available broker data — location, purchase patterns, app usage. None of Scott’s scenarios involve that data. He’s arguing against surveillance that would already be illegal, which “all lawful purposes” would NOT authorize because it’s... not lawful.

Even the illegal version would require the whole chain to hold: a president orders it, nobody leaks it, no court strikes it down, Congress doesn’t investigate, no journalist exposes it, and the public doesn’t revolt. All in a country that couldn’t keep the NSA’s programs secret despite classification. The FBI’s 278,000 improper queries of FISA Section 702 data were caught, and the FBI’s U.S.-person queries of that data later fell from 57,094 to 5,518 in a single year.

Trump has had the FBI, NSA, CIA, and the entire military apparatus at his disposal for over a year. He hasn’t arrested journalists or “disappeared” political opponents. If a truly corrupt leader wanted a surveillance state, they wouldn’t care about Anthropic’s contract terms — they’d use other vendors, open-source models, or just ignore the fine print.

Following laws is downstream of human capital, not downstream of Anthropic’s ToS.

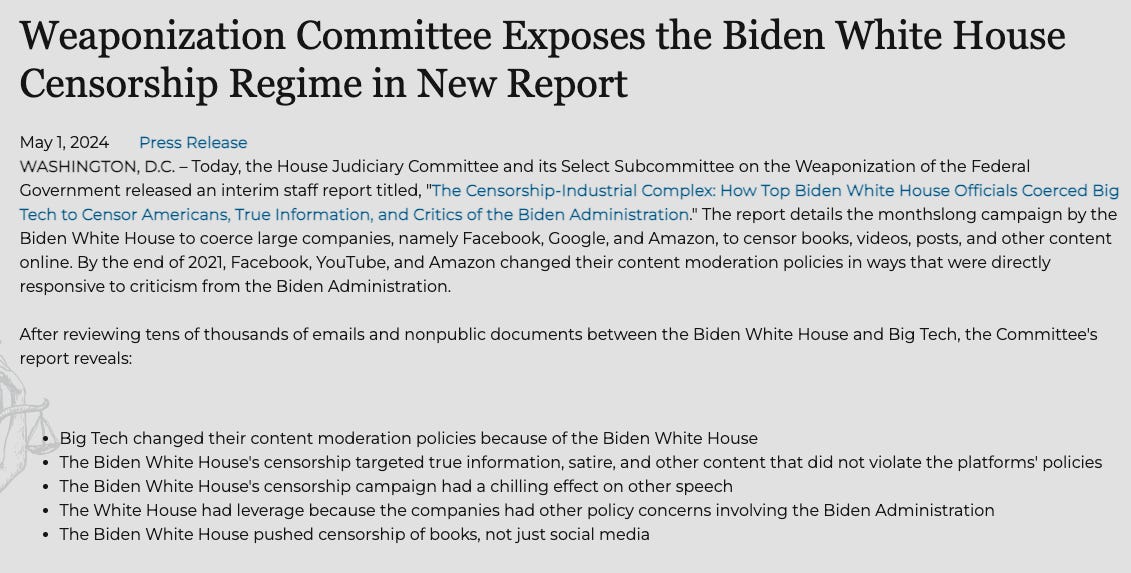

And here’s the thing people forget: you can already silence political adversaries and manipulate public discourse without AI. We literally just watched it happen.

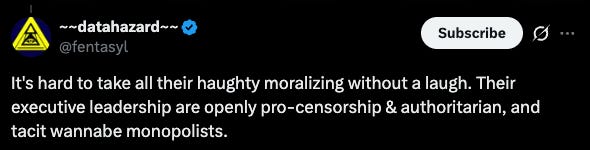

The Biden administration pressured Facebook, Google, YouTube, and Twitter to censor conservative speech, suppress the Hunter Biden laptop story, and remove COVID dissent — what the House Judiciary Committee documented as the “Censorship Industrial Complex.”

The Twitter Files confirmed it. Missouri v. Biden litigated it. A federal judge called it “an almost dystopian scenario” resembling “an Orwellian Ministry of Truth.” No AI was needed. No “mass surveillance” contract clause was involved. The government just picked up the phone. Social credit scores, political targeting, speech suppression — none of these require AI. They require willingness. If the people in power want to do it, they’ll do it with or without Claude.

If “the government might theoretically do something terrible with this tool” is sufficient to withhold it, why give the government access to any databases, computers, or forensic labs? Microsoft Excel is a threat to Americans’ privacy because now the government can process data faster! Calculators are downright unconstitutional… we can add criminal damages faster than ever before!

Dario’s Stand Doesn’t Change Surveillance

The most practically damning problem: at least in the near term, it accomplishes nothing (though it did spark a broader conversation and build serious momentum). The cost-benefit calculus was never attempted, and most people still aren’t thinking critically about legal “mass surveillance.”

The NSA, ICE, DHS, and FBI keep purchasing data regardless of whether Anthropic approves. The only question is which AI model analyzes it.

Net effect on surveillance? Zero.

Net effect on Anthropic? Potentially very negative; supply chain risk designation and federal bans could cost enterprise revenue and jeopardize cloud provider relationships.

Dario is not stopping surveillance at the moment — he’s just making it temporarily more painful while the government transitions to another AI provider.

Foreign Adversaries and Criminals Are Buying + Synthesizing the Same Data!

China, Russia, Iran, and North Korea are not following Anthropic’s code of ethics or U.S. law. They buy datasets, hack emails, extract phone data, and leverage whatever else they can, then synthesize it all with their own AI systems.

Criminals do the same with jailbroken open-source models.

The ODNI’s declassified report explicitly notes this data is available to foreign governments.

Dario is arguing that the one entity with democratic accountability, legal constraints, judicial oversight, and a constitutional obligation to protect Americans should operate with blinders on.

Everyone else inevitably gets full power.

The U.S. government is being pressured to do it the slow way because most Americans don’t understand how legal “mass surveillance” works.

The correct move is probably the inverse: give the U.S. government the best possible tools to counter the foreign surveillance and criminal exploitation that’s already happening. Elderly Americans are hounded by scam centers in India and Cambodia; the government should be getting ahead of this.

If Dario and Anthropic really care about privacy… they should advocate for the following:

Ban or sharply restrict the private brokerage of bulk sensitive data and data collection by all companies… OR (if the market is allowed to exist)

Permit tightly audited government AI use rather than forcing the U.S. government to fight with worse tools than criminals and foreign adversaries.

DOJ’s own final rule exists because foreign adversaries can use bulk American data for espionage, coercion, blackmail, and to enhance AI and military capabilities. Blocking audited U.S. government AI analysis of that same data preserves the danger while crippling the defense.

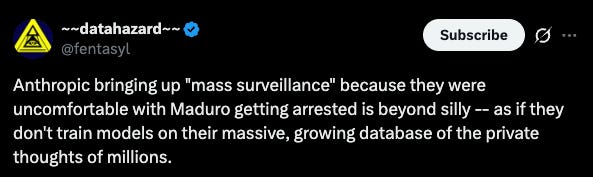

The Anthropic Hypocrisy (And the Broader Absurdity)

“He told me: ‘I think the worst part of the Cosby thing was the hypocrisy.’” (Norm Macdonald)

Anthropic built its models on massive web-scraped datasets, much of it gathered without the explicit consent of its creators. It collects conversations from millions of users covering deeply personal topics: health, relationships, finances, mental health. It builds persistent user memory profiles across conversations.

All of this powers a $380B+ company (as of 2026). Anthropic claims its commercial products are no-train by default, but the broader data ecosystem it operates in (scraping, retention, memory) is itself a form of mass data collection far more intimate than what brokers sell.

People tell Claude things they wouldn’t tell anyone.

Queries like: “I’m being treated for X-Y-Z health condition, what should I do?” and “how do I handle my divorce?” are far more intimate than what you’d get from an online data broker.

But zoom out from Anthropic specifically.

The broader argument being made is:

It’s fine for companies — with zero democratic accountability, zero judicial oversight, no FOIA obligations, hackable infrastructure, and no constitutional constraints — to collect and retain intimate data from hundreds of millions of Americans. Most users don’t even understand what they’re giving away. Nobody reads terms of service. These companies get breached routinely. The data ends up with brokers, criminals, and foreign adversaries.

But it’s unconscionable for the democratically elected government — with inspectors general, congressional oversight, FISA courts, and the entire chain of command — to efficiently analyze commercially available data to catch criminals.

If you genuinely believe data collection is dangerous, the target should be: (A) companies collecting it with minimal accountability (e.g. absurdly small legal settlements) AND (B) data brokers — not the government analyzing what’s already out there with maximum accountability.

You are literally implying that entities with no oversight should have the data but the entity with the most oversight shouldn’t.

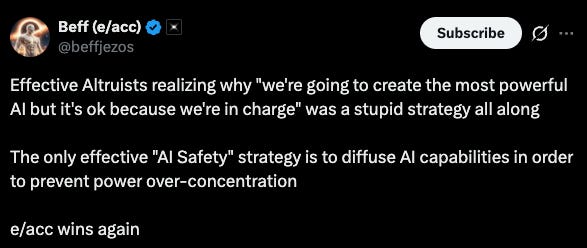

U.S. Law and Democracy vs. EA Luxury Beliefs

Trump won the 2024 presidential election and his administration has democratic legitimacy to enforce existing U.S. law. Dario wants his personal preferences (and those of fellow EAs) to reshape U.S. law.

This isn’t their first crack at it — they lobbied hard for AI safety laws with NIST and the AI Safety Institute, endorsed California’s SB 53, and submitted OSTP recommendations for mandatory model evals.

If Trump hadn’t pushed back, all AI labs would have had to meet an insane safety threshold set by Anthropic: monopoly via regulatory capture.

A CEO is free to walk away from a contract. But it is absurd to pretend his terms of service should function as de facto public law while the broker market, the legal authorities, and substitute models all remain available.

Some perceive this as a highly partisan act, even if the intent was apolitical. The “restrictions” on usage happen to align neatly with the agenda of most Democrats who want to:

Make it harder to track illegal immigrants and criminals

Increase degree of difficulty for deportations

Prevent the government from identifying fraud and circular NGO schemes

Inhibit the government’s ability to arrest criminals and gangs

Weaken the U.S. military’s global competitiveness

The Fourth Amendment protects against unreasonable search and seizure, not against the government being efficient at legally authorized activities.

Nobody argued that the government getting faster computers in the 90s was unconstitutional.

And the data broker concern Dario cited depends almost entirely on Americans voluntarily giving their data to apps.

Most people don’t bother with basic digital hygiene, which tells you how much the average American actually cares about their data. They only start worrying when they hear “mass surveillance” attached to the government; they rarely think about “mass surveillance” by tech companies.

People who bear the cost of slower law enforcement are never the ones making the decision. Dario and fellow EA cult leaders don’t live in neighborhoods ravaged by illegals, homelessness, Tren de Aragua gangs, and fentanyl.

It’s easy to be principled about handicapping the U.S. government when you have nothing but luxury beliefs and are inside a “diverse” high IQ tech bubble.

What’s Really Going On Here?

Dario is smart enough to understand this, and I think if you pressed him on the EV calculus, he’d be willing to admit that it’s likely asymmetrically favorable for the safety and security of the U.S.

I think he’d also acknowledge that some competitor will fill the “public data synthesis” gap Anthropic refuses to fill, and that foreign adversaries will keep using this capability to surveil U.S. citizens, gaining an edge over the U.S. government in some respects if the practice becomes illegal under U.S. law.

If the concern is illegal surveillance — I’m 100% on his side.

If it’s synthesizing public datasets that would be synthesized anyway in order to catch criminals more efficiently, I’m nowhere near his side.

Unwillingness to compromise in gov contract negotiations is likely related to Anthropic’s employee base: heavily EA-aligned, Bay Area, overwhelmingly progressive.

If Dario had accepted “all lawful purposes,” he’d potentially face internal revolt from employees who view any Trump cooperation as “morally” and “ethically” toxic.

And Trump likely had to escalate with Anthropic, since letting it off without consequence would set a precedent where every AI company bakes political exceptions into government contracts.

The tail risk for Anthropic is cloud providers: AWS and Google Cloud have massive government contracts they won’t jeopardize.

Dario is likely betting that Trump moves on, and from a marketing and recruitment perspective, he couldn’t have scripted it better: a Bay Area tech founder gets to refuse a Republican president, dress it up as principled, and watch app store rankings and social status spike.

But none of that changes the consequentialist reality on the ground:

The government will still buy and analyze bulk data; just with a potentially worse AI model

Criminals and foreign adversaries will continue to exploit the same datasets with zero restrictions

Every harm that AI surveillance could help prevent (fraud, scam centers, trafficking, fentanyl networks, espionage) continues at full speed, or faster, because the perpetrators are using AI to synthesize this data

Anthropic, the data brokers, and every tech company that scraped the web to build their models continue operating exactly as before

If Dario genuinely cared about privacy, he’d be advocating for zero data access and full anonymization as standard practice across all companies, banning data brokers entirely, and sandboxing all user data from corporate use.

Instead he’s imposing a contractual veto on the one entity with democratic accountability while the actual privacy violations — the broker market, the scraping, the breaches, the foreign exploitation — continue untouched.

The world where the U.S. government has access to the best AI tools for law enforcement is almost certainly better than the world where it doesn’t.

The rationalists don’t have a rational argument here.