Why Micron Is Down 31% After Its Best Quarter Ever — HBM, TurboQuant, and Whether It Comes Back

Analyzing the forces behind Micron's recent selloff.

Micron just posted $23.86 billion in quarterly revenue — nearly tripling year-over-year — with $12.20 non-GAAP EPS against a $9.31 Street estimate and 74.9% non-GAAP gross margin.

It guided fiscal Q3 to $33.5 billion of revenue at roughly 81% gross margin.

The stock then fell as much as ~31% from its March 18 high of $471.34 — bottoming near $323 intraday before rebounding to ~$338 by month-end.
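The drawdown and the rebound needed to undo it are not symmetric, because each is measured from a different base. A minimal sketch of that arithmetic, using only the three prices quoted above (the variable names are illustrative):

```python
# Prices quoted in the article, in USD per share.
HIGH = 471.34      # March 18 high
LOW = 323.00       # approximate intraday low
REBOUND = 338.00   # approximate month-end price


def pct_change(start: float, end: float) -> float:
    """Return the percentage change going from start to end."""
    return (end - start) / start * 100.0


drawdown = pct_change(HIGH, LOW)               # decline from the high to the low
gain_from_low = pct_change(LOW, HIGH)          # gain needed from the low
gain_from_rebound = pct_change(REBOUND, HIGH)  # gain needed from ~$338

print(f"High to low:        {drawdown:+.1f}%")
print(f"Low back to high:   {gain_from_low:+.1f}%")
print(f"~$338 back to high: {gain_from_rebound:+.1f}%")
```

A decline of roughly 31% needs a gain of roughly 46% from the low to get back to the high, and a somewhat smaller gain from the month-end price.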

The reality: Micron is tanking because the market is attacking the duration of a memory windfall, not because Micron stopped printing near-term numbers.

This is not one bad headline. It’s six or seven negative catalysts stacking within a two-week window, hitting a momentum name that had already priced in excellence.

What caused Micron to sell off?

Tier 1: The Core Repricing

1. Peak-Margin / Cycle Fear From Micron’s Own Spending Ramp (~30%)

This is the single biggest driver. Micron’s numbers are exceptional, but the market is already looking past them.

The company lifted FY2026 capex above $25 billion and said FY2027 capex will step up meaningfully again, with construction-related capex expected to rise by more than $10 billion year over year. In memory stocks, that reads as “today’s shortage can become tomorrow’s glut.” The multiple compresses before the earnings do.

Memory has a brutal cyclical history. Every previous supercycle ended with companies investing at the peak, supply overshooting demand, and margins cratering. The market has been burned by this exact pattern with MU multiple times. When it sees $25B+ stepping up to $35B+, the reflex is to pull forward the next-cycle debate — even if current supply remains structurally constrained.

The bull counter: Micron’s management said on the Q2 call that customers are receiving only 50–67% of their memory requirements and that supply constraints persist beyond 2026, with meaningful new capacity not online until FY2028 at the earliest. The capex is responding to confirmed demand, not speculating on future demand. But the market doesn’t wait for FY2028 to price the risk.

2. TurboQuant and the Broader AI-Efficiency / Memory-Displacement Narrative (~25%)

On March 24, Google Research published TurboQuant — a compression algorithm achieving at least 6x reduction in KV-cache memory for large language models with zero measurable quality loss. The stock dropped 3.4% on the news and hasn’t recovered.

What the market heard: “AI needs 6x less memory.”

TurboQuant targets the KV cache — a specific data structure used during inference — and Google says it helps vector search.

Where the market overreaches: jumping from “one inference-memory bottleneck gets more efficient” to “HBM scarcity and memory demand broadly collapse.”

BofA’s Vivek Arya noted that comparable compression techniques have existed since 2024 without altering hardware procurement at scale. Google itself is guiding $175–185B in 2026 capex — up ~100% YoY — which contradicts the idea that the company publishing this paper believes it reduces the need for memory hardware.

TurboQuant is also not the only thing feeding this narrative. MatX raised $500M in February to build a custom LLM chip claiming 10x better training performance than NVIDIA GPUs, using a hybrid SRAM-HBM architecture.

Etched raised $500M at a $5B valuation the month before with a similar pitch. Groq and Cerebras have been making SRAM-first designs for years. The narrative that the industry is actively trying to displace HBM has real startups with real money behind it.

But the narrative runs straight into the Jevons paradox: in a market where customers are receiving only half to two-thirds of what they need, making memory more efficient doesn't reduce demand; it reallocates it.

Memory freed up by compressing inference doesn’t sit idle.

It gets absorbed by the next workload in a queue that stretches years into the future:

A training run that was waiting for capacity

A RAG deployment that couldn’t scale

A longer context window that wasn’t feasible before

An agentic system that needs persistent state

When you’re only serving 50–67% of customer requirements, there is no scenario where making one workload more efficient results in less total HBM being consumed. The backlog absorbs everything.

And every startup trying to work around the memory bottleneck confirms that memory is the bottleneck. MatX’s chip combines on-chip SRAM with HBM sourced from the same memory makers that supply NVIDIA. Its closest competitor Etched plans to source memory wafers from SK Hynix, Samsung, and Micron. These chips don’t displace memory demand — they add more buyers to a market where the existing buyers already can’t get enough.

More fundamentally: you can optimize inference all you want, but breakthroughs at the frontier — longer context, multimodal, world models, whatever comes next — require more raw memory.

Efficiency gains at the inference layer fund the next generation of training at the frontier layer. Memory gets reallocated upward in the stack, not returned.

Per-GPU memory content has grown roughly 12x from the A100’s 80GB to over 1TB projected for Rubin Ultra. As SemiAnalysis put it, modern AI follows a “memory-Parkinson” dynamic where architectures “relentlessly grow to occupy whatever HBM becomes available.”

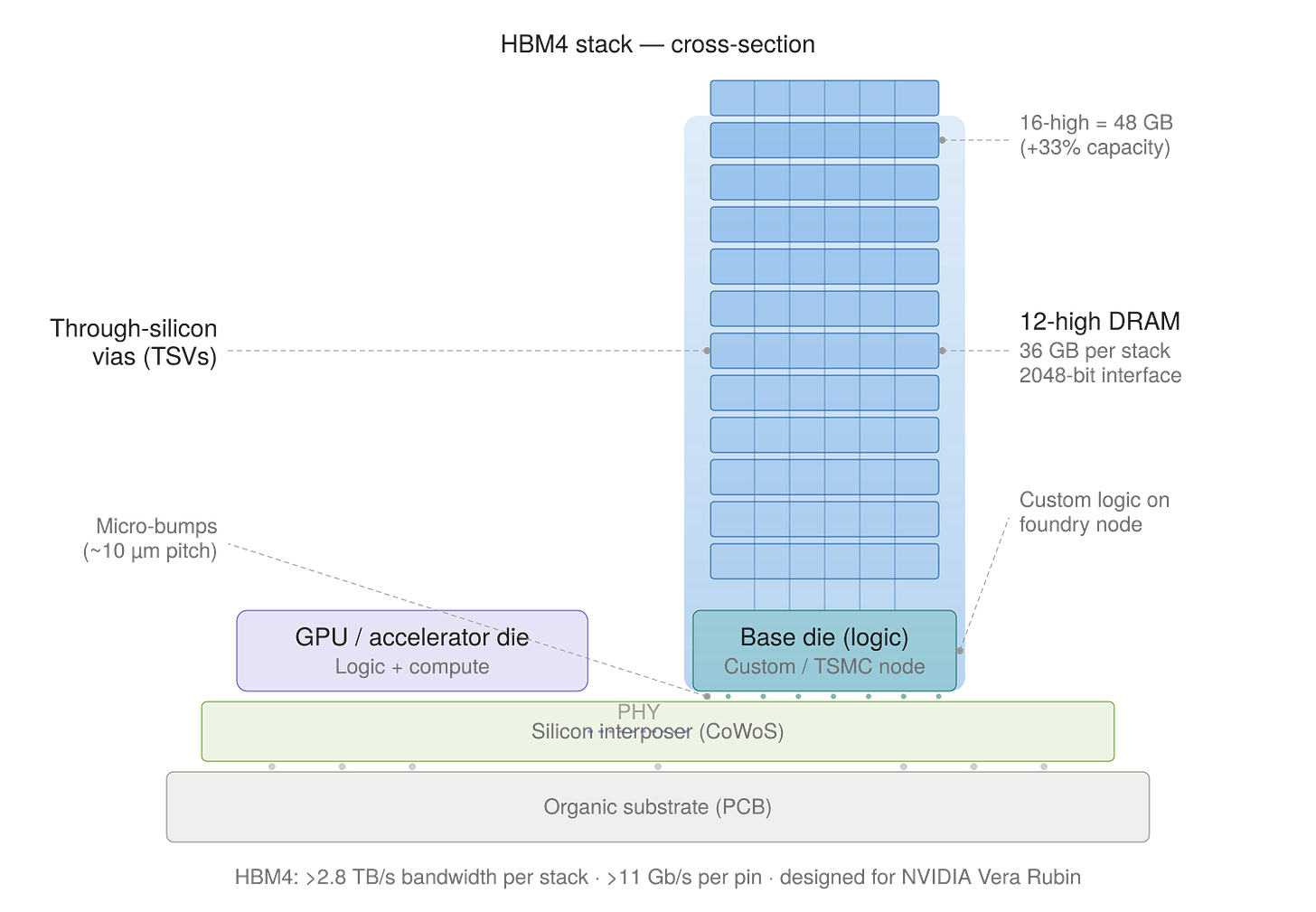

Micron is already shipping HBM4 36GB 12-high stacks in volume for NVIDIA Vera Rubin at >2.8 TB/s bandwidth, and has shipped 16-high (48GB) samples delivering a 33% capacity increase per placement — the next step in a trajectory that only demands more memory per chip generation, not less.

The only scenario where total memory demand actually declines is if efficiency gains outpace both the existing unmet demand backlog and the growth in new workloads simultaneously. Given that the backlog alone amounts to 33–50% of customer requirements going unmet, that’s essentially impossible in the 2026–2028 timeframe.
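The backlog argument can be put as a toy supply-bound model. This is a rough sketch, not a forecast: it assumes shipped memory equals the smaller of supply and demand, takes the 50–67% fill rate and the 6x compression figure from the text, and treats the share of demand that compression actually touches as a purely hypothetical input. Growth in new workloads is ignored, which makes the threshold conservative.

```python
def breakeven_share(fill_rate: float, compression: float) -> float:
    """Share of total demand that must be compressed before demand drops to supply.

    Supply is fill_rate * demand. Compressing a share s of demand by
    `compression`x leaves demand * (1 - s * (1 - 1/compression)).
    Setting that equal to supply and solving for s gives the threshold.
    """
    return (1.0 - fill_rate) / (1.0 - 1.0 / compression)


def memory_consumed(supply: float, fill_rate: float,
                    share: float, compression: float) -> float:
    """Units of memory actually shipped after compression hits `share` of demand."""
    demand = supply / fill_rate
    compressed_demand = demand * (1.0 - share * (1.0 - 1.0 / compression))
    return min(supply, compressed_demand)


for fill in (0.50, 0.67):
    s = breakeven_share(fill, compression=6.0)
    print(f"fill rate {fill:.0%}: compression must cover {s:.0%} of all demand")

# Hypothetical: compression applies to 30% of demand, supply normalized to 100.
print(memory_consumed(supply=100.0, fill_rate=0.50, share=0.30, compression=6.0))
```

Under these assumptions, a 6x gain has to apply to well over a third of all memory demand before a single unit of supply goes unsold, and that is before any new workload growth is counted.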

None of this stops the stock from de-rating now. The paper was tested only on 8B parameter models, hasn’t been deployed at production scale, and will be formally presented at ICLR in April. In a fear environment, it provided the intellectual justification for selling — and that’s enough. But the fundamental demand case hasn’t changed.

3. Macro Risk-Off: War, Oil, Yields, AI-Financing Stress (~20%)

This isn’t stock-specific, but it’s the foundation layer everything else sits on. Reuters tied the current tech selloff to the Iran war, oil above $100, volatile yields, and skepticism about whether Big Tech can comfortably finance a $635 billion 2026 AI-infrastructure buildout under worsening macro conditions.

Oil above $100 revives recession fears, hitting cyclical sectors first — and memory is the most cyclical part of semiconductors in the market’s mental model. Nikkei dropped 4%, KOSPI dropped 4.5%, yields rose, and the Fed-cut narrative evaporated. The Strait of Hormuz closure disrupted shipping lanes and input costs for Asian fabs.

This is the environment in which every other catalyst gets amplified. TurboQuant in a calm market is a one-day dip. TurboQuant layered on top of a war, rising yields, and AI-financing skepticism is a week-long cascade.

Tier 2: Real But Secondary

4. Mainstream DRAM Price Softness (~10–15%)

This is the factor most MU bulls are underweighting because they’re focused on HBM.

Micron is not a pure HBM proxy. In fiscal Q2, cloud revenue was $7.75 billion, mobile/client was $7.71 billion, and core data-center was $5.69 billion. The mobile/client segment is a huge chunk of revenue and it’s exposed to commodity DRAM pricing.

Citi noted mainstream DDR5 16GB DRAM spot prices are down ~6% since Micron reported. Even if HBM remains tight, a stock that was pricing extraordinary scarcity across the entire memory stack will react hard to any hint that conventional DRAM pricing has peaked.

The risk: if spot-price weakness leaks from the commodity market into contract pricing over the next 1–2 quarters, it creates a margin headwind that the HBM business (while growing fast) may not fully offset.

HBM is the growth story, but DRAM is still the revenue base.

5. Competition: SK Hynix and Samsung (~10%)

SK Hynix remains the dominant HBM supplier (50–57% market share depending on the quarter) and filed a confidential SEC submission for a US listing that could raise $10–14B.

This matters on multiple levels: it gives US investors a directly investable alternative to MU for AI memory exposure, the proceeds would go directly into AI capacity expansion, and analysts noted the listing enables direct valuation comparisons that may highlight SK Hynix’s “undervaluation despite stronger profitability.”

Samsung is also recovering. Its co-CEO said customers told him “Samsung is back” on HBM4, its entire 2026 HBM4 production is sold out, and it’s committing $73B to semiconductor investment in 2026.

A projected HBM market share shift, from ~62/21/17 (SK Hynix/Micron/Samsung in mid-2025) toward ~50/20/30 in the same order as Samsung recovers to ~30%, means Micron’s relative position faces more competitive pressure even as the market grows.

That said, the SK Hynix listing cuts both ways.

If SK Hynix lists at a higher multiple than MU (which analysts expect), it could actually re-rate MU upward by establishing a higher valuation benchmark for the entire AI memory sector.

None of this means Micron loses near-term demand. But it reinforces the market’s fear that supernormal margins will attract aggressive supply response.

6. Stargate / Abilene / OpenAI LOI Drama (~5%)

Bloomberg reported Oracle and OpenAI cancelled plans to expand the Abilene Stargate campus from 1.2GW to 2.0GW. Combined with reporting that Samsung and SK Hynix had signed letters of intent (not binding purchase orders) with OpenAI, this created a “demand destruction” narrative.

The expansion was dropped due to financing disputes and shifting demand forecasts, not declining AI demand. The broader 4.5GW Oracle-OpenAI agreement remains intact. NVIDIA deposited $150M with Crusoe to secure the site for Meta. Capacity is being reallocated, not destroyed.

Micron’s disclosed customer commitments are broader than any single OpenAI project. In December the company said it had completed agreements on price and volume for its entire calendar-2026 HBM supply, and in March it announced its first-ever five-year strategic customer agreement. The guide doesn’t depend on Stargate.

This is a narrative amplifier — not a core driver.

Tier 3: Noise the Market Is Treating as Signal

7. ARC-AGI-3 and “AI Isn’t That Smart” Sentiment (~2–3%)

ARC-AGI-3 launched March 25 — inside the selloff window. Frontier models scored under 1% (Gemini 3.1 at 0.37%). Humans score 100%. The headline: “Best AI in the world can’t do what any human can.”

This doesn’t logically affect HBM demand, which is driven by the current generation of models scaling inference — not by AGI timelines. But in a fear environment, “AI scores 0.37% on intelligence test” gives emotional permission to question the entire buildout thesis. It compounds the TurboQuant narrative: maybe AI needs less memory because maybe AI isn’t progressing as fast as the capex assumes.

This is a vibes-level catalyst, not a fundamental one. But vibes move stocks during panics.

8. Helium Supply Crisis (~2% of the current move, but significant tail risk)

Iran struck Qatar’s Ras Laffan Industrial City — roughly 30% of global helium production, now offline with damage that could take years to rebuild. Helium is irreplaceable in semiconductor fabrication: it cools wafers during lithography, flushes toxic residue, and is essential for EUV leak detection. South Korea imported 64.7% of its helium from Qatar in 2025. Spot prices have surged 40–100%.

This matters structurally but isn’t driving the current MU drawdown. Korean chipmakers reportedly have helium inventories through approximately June 2026. It’s a tail risk, not a present trigger.

The paradox: if the helium crisis extends beyond 6 months, it constrains production industry-wide — which actually validates the supply-shortage thesis and protects memory pricing. The market isn’t parsing this yet.

Also underreported: every HDD at 10TB+ uses helium as a sealed internal gas. Seagate and Western Digital have sold out 2026 production. If the helium crisis constrains HDD capacity, it could become a tailwind for Micron’s SSD business.

9. Clay, NY Lawsuit and Leftist Political Overhang (~1%)

In January 2026, Jobs to Move America and a local residents’ coalition filed suit to halt construction of Micron’s $100B Clay, New York fab, challenging the environmental review process. A deeper investigation found the lead plaintiff group had never held a meeting before the suit was filed and some members didn’t even know who the others were.

The most recent New York news was actually positive — state approval for a key power-line project and Micron saying ground preparation is ahead of plan. Background noise. Doesn’t affect 2026–2027 revenue trajectory.

10. YMTC Patent Suit, “Sharks,” and Assorted Noise (<1%)

YMTC’s London patent suit is part of the ongoing US-China tech rivalry and is not a new development. As for institutional manipulation: David Tepper increased his Appaloosa MU position by 200% in Q4. The analyst consensus target is $515–530 with 38 buys against 2 sells. Smart money may be buying the dip, but “sharks intentionally forcing it down” requires position and flow data that doesn’t exist publicly. A crowded AI trade unwinding on bad narrative timing is sufficient to explain this move.
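The percentage weights attached to the ten catalysts are rough judgment calls and are not stated to sum to exactly 100%. A small sketch that totals them and rescales the midpoints, assuming "<1%" means somewhere between 0 and 1 and using shortened labels of my own:

```python
# (low, high) weights in percent, as given in the ten catalyst headings.
# Single figures use the same low and high; "<1%" is taken as 0 to 1.
weights = {
    "Peak-margin / cycle fear": (30, 30),
    "TurboQuant / AI efficiency": (25, 25),
    "Macro risk-off": (20, 20),
    "Mainstream DRAM softness": (10, 15),
    "Competition": (10, 10),
    "Stargate / Abilene": (5, 5),
    "ARC-AGI-3 sentiment": (2, 3),
    "Helium supply": (2, 2),
    "Clay, NY lawsuit": (1, 1),
    "YMTC and assorted noise": (0, 1),
}

low_total = sum(low for low, _ in weights.values())
high_total = sum(high for _, high in weights.values())

# Rescale midpoints so they sum to 100 for easier comparison.
midpoints = {name: (low + high) / 2 for name, (low, high) in weights.items()}
mid_total = sum(midpoints.values())
normalized = {name: mid / mid_total * 100 for name, mid in midpoints.items()}

print(f"Stated weights sum to {low_total}-{high_total}%")
for name, share in normalized.items():
    print(f"{name:<28} {share:5.1f}%")
```

The rescaling barely changes the ranking: the three Tier 1 drivers still account for roughly 70% of the move on this reading.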

The Structural Bull Case for Micron in 2026

The piece so far has been a fear catalog. Here’s what the fear is colliding with.

Memory is the bottleneck — and it’s getting worse, not better.

Data centers are projected to consume 70% of all high-end memory chips produced worldwide in 2026, up from 20–30% as recently as 2022.

That’s a permanent reallocation of global supply toward AI, per IDC. AI servers use 10–20x more memory per unit than traditional servers. TrendForce projects memory prices rising another 70% in 2026 due to persisting shortages.

The supply response can’t catch up fast enough. New fab capacity takes 3–5 years to build. Global DRAM supply growth in 2026 is projected at just 16% year-over-year — well below historical norms and well below demand growth.

The 3 companies that control ~90% of the market (Samsung, SK Hynix, Micron) have all shifted production toward higher-margin HBM and enterprise DDR5, further starving consumer electronics, automotive, and everything else. The WSJ compared the emerging memory shortage to the automotive production delays during COVID.

NVIDIA’s roadmap shows per-GPU memory content growing roughly 12x from the A100’s 80GB to over 1TB for Rubin Ultra.

Every next-generation GPU platform requires more memory than the last.

The architectural trajectory is unambiguously memory-intensive.

The broader memory market reflects this. TrendForce projects total memory market revenue at $551.6B in 2026, surging to a peak of ~$842.7B in 2027.

DRAM contract prices rose 90–95% QoQ in Q1 2026 — an extraordinary move even by memory-cycle standards.

SemiAnalysis, which has been calling the shortage since late 2024, argues the supply-demand imbalance is “deteriorating rather than normalizing” and believes the market is “not even close to the peak.”

As established above: when customers can only get half of what they need, efficiency gains don’t destroy demand — they reallocate it. The fear narrative requires demand destruction. The data shows unmet demand growing.

Micron’s HBM4 execution is better than the market thinks.

In late January, SemiAnalysis reduced Micron’s projected share of NVIDIA Rubin HBM4 to zero, citing poor pin speed performance from Micron’s internal base die design. They projected Rubin HBM4 would consolidate into ~70% SK Hynix / ~30% Samsung. The stock dipped on the leak.

Six weeks later, at GTC 2026, Micron announced high-volume production of HBM4 explicitly “designed for NVIDIA Vera Rubin” — achieving over 11 Gb/s pin speeds (the threshold NVIDIA required), delivering >2.8 TB/s bandwidth, and shipping 16-high samples.

Either SemiAnalysis was wrong about the exclusion, Micron fixed the problem faster than anyone expected, or Micron’s HBM4 is going to non-NVIDIA customers who are equally hungry. Regardless, the bear narrative on Micron’s HBM competitiveness was overtaken by events before the selloff even began — and the market hasn’t priced that in because TurboQuant and the war buried the signal.

Micron also supplies LPDDR5X for the Vera CPU on Rubin platforms and announced the industry’s first PCIe Gen6 data center SSD in volume production — broadening its footprint across the entire Vera Rubin memory and storage stack, not just HBM.

US policy is directly subsidizing Micron’s expansion.

The One Big Beautiful Bill Act, signed July 4, 2025, delivered multiple tailwinds to the US semiconductor industry simultaneously. Domestic R&D costs can once again be fully expensed in the year incurred, reversing the TCJA’s 5-year amortization requirement.

The Section 48D advanced manufacturing investment credit for semiconductor fabs rose from 25% to 35%. And 100% bonus depreciation was made permanent.

These provisions make Micron’s $25B+ capex commitment look more rational than the raw number suggests. The after-tax cost of domestic expansion is meaningfully lower than the headline figure, and the policy environment actively incentivizes exactly the kind of investment Micron is making.

Micron has also disclosed eligibility for up to ~$6.165B in CHIPS Act grants. The cycle-fear argument treats capex as pure risk; the tax code treats it as subsidized strategic investment.
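To get a feel for how much the credit could move the headline number, here is a deliberately simplified sketch. The share of capex that qualifies for the credit is a hypothetical input, not a Micron disclosure (much of the company's spending is outside the US), and the calculation ignores CHIPS grants, depreciation timing, and eligibility rules.

```python
def net_capex_after_credit(capex_bn: float, qualifying_share: float,
                           credit_rate: float) -> float:
    """Capex in $B net of an investment tax credit on the qualifying portion."""
    if not 0.0 <= qualifying_share <= 1.0:
        raise ValueError("qualifying_share must be between 0 and 1")
    credit = capex_bn * qualifying_share * credit_rate
    return capex_bn - credit


HEADLINE_CAPEX_BN = 25.0  # FY2026 capex floor cited in the article

for share in (0.25, 0.50):        # hypothetical qualifying shares
    for rate in (0.25, 0.35):     # old and new credit rates from the text
        net = net_capex_after_credit(HEADLINE_CAPEX_BN, share, rate)
        print(f"qualifying {share:.0%}, credit {rate:.0%}: net ${net:.2f}B")
```

The size of the benefit depends almost entirely on the qualifying share, which is why the raw capex figure overstates the cost of the domestic portion specifically.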

This also matters for the competitive landscape: SK Hynix’s Indiana packaging plant benefits from the same incentives. Yet Micron — while it manufactures globally across Singapore, Japan, and Taiwan — is the only one of the Big Three domiciled in the US and is making its largest domestic expansion in company history with the $100B Clay, NY fab.

Micron Stock: ATH Odds & Timelines

These are judgment calls, not model output. The key insight: time helps the recovery case only up to a point. The same new fabs and packaging capacity that support Micron’s long-run growth also raise the risk that 2027–2028 becomes more about supply normalization than scarcity.

Will MU revisit the $470 high?

By June 2026 (~15% odds): Requires war resolution, no hyperscaler capex cuts, and a rapid sentiment reversal. Three months is very fast for a roughly 39% recovery from ~$338 (about 46% from the $323 low).

By March 2027 (~50% odds): Requires Q3 earnings confirming the $33.5B guide, at least one more quarter of sustained HBM demand, and macro stabilization. This is the realistic base case for recovery.

By March 2028 (~60% odds): More time, but also more supply coming online. The cycle-turn risk offsets the longer runway.

Will MU clear $500 (new ATH)?

By June 2026 (~5% odds): Near-impossible without a major positive catalyst — war ceasefire, blowout Q3, and HBM4E NVIDIA qualification all in the same quarter.

By March 2027 (~35% odds): Requires the full bull case: AI memory supercycle validated across multiple quarters, Micron maintains or grows HBM share, conventional DRAM pricing stabilizes.

By March 2028 (~45% odds): Plausible if the HBM4/4E generation delivers and competition doesn’t compress margins faster than the TAM grows.

The reason these odds don’t explode higher with time: Micron and its rivals are now spending so aggressively — SK Hynix bought $8B in ASML EUV machines, Samsung is spending $73B in 2026 alone, and Micron is at $25B+ stepping up to $35B+ — that investors are pulling forward the next-cycle debate. The supply normalization risk in 2027–2028 is real and partially offsets the longer recovery runway.
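One way to see how little the extra year adds is to convert the cumulative odds above into per-window odds. This sketch only rearranges the numbers already given; the conditional figure is the chance of first reaching the target in a window, given it was not reached earlier.

```python
# Cumulative odds from the two lists above, as probabilities.
odds_470 = {"Jun 2026": 0.15, "Mar 2027": 0.50, "Mar 2028": 0.60}
odds_500 = {"Jun 2026": 0.05, "Mar 2027": 0.35, "Mar 2028": 0.45}


def per_window(cumulative: dict) -> list:
    """Return (label, added probability, conditional probability) per window."""
    rows = []
    previous = 0.0
    for label, p in cumulative.items():
        added = p - previous                  # newly added probability mass
        conditional = added / (1.0 - previous)  # given not reached before
        rows.append((label, added, conditional))
        previous = p
    return rows


for name, odds in (("$470", odds_470), ("$500", odds_500)):
    print(f"Target {name}")
    for label, added, conditional in per_window(odds):
        print(f"  by {label}: +{added:.0%} added, {conditional:.0%} conditional")
```

On these numbers the April 2027 to March 2028 window adds only ten points for either target, which is the supply-normalization discount expressed as a probability.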

What Actually Matters Next

Signals that MU recovers:

Microsoft, Amazon, Alphabet, and Meta keep 2026 AI-capex plans intact through Q2 earnings

Micron keeps repeating that supply-demand conditions remain tight beyond 2026

Conventional DRAM spot weakness stays confined to spot markets and doesn’t leak into contract pricing

HBM4 for Vera Rubin is already in volume production; HBM4E qualification for Rubin Ultra on schedule would extend the runway

War de-escalation or ceasefire reduces oil/helium/macro risk simultaneously

SK Hynix US listing creates a sector re-rating rather than rotation out of MU

Signals that the bear case is right:

Any Big 4 hyperscaler cutting 2026 AI capex guidance

DDR5 contract pricing rolls over, compressing Micron’s blended margins

Samsung takes significantly more than the projected ~30% of the HBM4 market

Helium crisis extends beyond 6 months and forces industry-wide production curtailments

A second major AI efficiency breakthrough beyond TurboQuant that actually reaches production deployment and demonstrably reduces hardware procurement

Bottom Line: Micron (MU) in March 2026

Even after a partial rebound to ~$338, MU is trading around 4–5x NTM P/E — compressed even by memory-stock standards.
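The article does not state the earnings estimate behind that multiple, but it can be backed out. A quick sketch, assuming the ~$338 price and the $12.20 quarterly non-GAAP EPS quoted earlier; the annualized figure is a naive run rate, not a forecast:

```python
PRICE = 338.0          # approximate month-end price from the article
Q2_NON_GAAP_EPS = 12.20  # fiscal Q2 non-GAAP EPS from the article


def implied_eps(price: float, pe_multiple: float) -> float:
    """EPS implied by a given price and P/E multiple."""
    return price / pe_multiple


eps_at_5x = implied_eps(PRICE, 5.0)  # lower bound of implied NTM EPS
eps_at_4x = implied_eps(PRICE, 4.0)  # upper bound of implied NTM EPS

# Naive comparison: the latest quarter's EPS repeated four times.
run_rate_eps = Q2_NON_GAAP_EPS * 4
run_rate_pe = PRICE / run_rate_eps

print(f"Implied NTM EPS at 4-5x: ${eps_at_5x:.2f} to ${eps_at_4x:.2f}")
print(f"P/E on annualized Q2 EPS: {run_rate_pe:.1f}x")
```

A 4–5x forward multiple therefore assumes earnings well above the current quarterly run rate, which is consistent with the step-up in guided revenue and margin.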

The bull case: Micron is still guiding record Q3 revenue, says AI and traditional server demand remain constrained by inadequate supply, has begun HBM4 volume production explicitly for NVIDIA Vera Rubin (overcoming a SemiAnalysis exclusion call from six weeks earlier), and is expanding domestic production with enhanced tax incentives that reduce the after-tax cost of its capex ramp.

The bear case: Micron and its rivals are spending so aggressively on new capacity that investors are pulling forward the cycle debate — and conventional DRAM pricing is already softening.

The $33.5B in guided revenue seems legitimate for the next couple of quarters, but many question whether supernormal margins can be sustained.

Short-term traders are avoiding the stock because of the war, softening DRAM spot prices, and Twitter threads about compression algos and Stargate funding. My guess is that a Jevons paradox effect emerges in HBM and that HBM stocks, including MU, bounce back.

The thing that actually matters, whether HBM demand and hyperscaler capex hold, hasn't broken.

Every major customer is still spending, Micron's HBM order book is contracted through 2026, and 70% of high-end global memory production is being consumed by an AI buildout that every major company on earth is accelerating, not slowing down.

DISCLAIMER: NOTHING HERE IS FINANCIAL OR INVESTMENT ADVICE.